Introduction Artificial Intelligence has evolved rapidly over the past few years, transforming industries, workflows, and digital experiences. Among the most talked-about technologies today are AI Agents and Generative AI. While many people use these terms interchangeably, they represent two distinct categories of artificial intelligence with different purposes, capabilities, and business impacts. Generative AI became globally recognized through tools like OpenAI’s ChatGPT, image generators, and AI-powered content creation platforms. Meanwhile, AI agents are emerging as autonomous systems capable of reasoning, planning, decision-making, and executing tasks with minimal human intervention. Understanding the difference between AI agents and generative AI is essential for businesses, developers, and organizations looking to implement modern AI solutions effectively. In this comprehensive guide, we will explore: What generative AI is What AI agents are Core differences between the two Real-world applications Advantages and limitations How they work together Future trends shaping AI automation What Is Generative AI? Generative AI refers to artificial intelligence systems designed to create new content based on patterns learned from massive datasets. These systems generate outputs such as: Text Images Audio Videos Code Designs Popular examples include: OpenAI ChatGPT Google Gemini Anthropic Claude Midjourney Adobe Firefly Generative AI models rely heavily on deep learning architectures such as: Large Language Models (LLMs) Diffusion Models Transformer Networks Generative Adversarial Networks (GANs) These systems predict the next word, pixel, sound, or pattern based on training data. How Generative AI Works Generative AI models are trained using enormous datasets containing billions of examples. During training, the AI learns: Language structures Semantic relationships Visual patterns Coding syntax User behavior patterns For example, a text-based generative AI model predicts the most likely next word in a sentence. If a user asks: “Write a marketing email for a SaaS product” The AI generates content based on statistical patterns learned during training. Main Features of Generative AI 1. Content Creation Generative AI excels at producing: Blog articles Social media posts Product descriptions Images Marketing campaigns Source code 2. Human-Like Responses Modern LLMs simulate conversational interactions with impressive fluency. 3. Creativity Enhancement Generative AI supports brainstorming, ideation, and design generation. 4. Fast Output Generation Tasks that once took hours can now be completed in seconds. 5. Multimodal Capabilities Many advanced models process: Text Images Audio Video simultaneously What Are AI Agents? AI agents are autonomous systems that can: Observe environments Analyze situations Make decisions Plan actions Execute tasks Learn from feedback Unlike generative AI, which primarily creates content, AI agents are designed to act independently toward achieving goals. AI agents can integrate: LLMs APIs Databases Software tools Automation workflows Memory systems Their primary objective is task execution rather than content generation alone. How AI Agents Work AI agents typically operate using a loop: Observe Reason Plan Act Evaluate Repeat For example, an AI customer support agent may: Read incoming tickets Categorize requests Search company databases Draft responses Escalate complex issues Update CRM systems All with minimal human intervention. Core Components of AI Agents 1. Reasoning Engine Determines what actions to take. 2. Memory Stores previous interactions and context. 3. Planning System Breaks goals into smaller executable steps. 4. Tool Integration Uses external software, APIs, and applications. 5. Autonomous Decision-Making Acts independently based on objectives. AI Agents vs Generative AI: Key Differences AI Agents vs Generative AI Comparison of major capabilities between AI agents and generative AI systems. Feature Generative AI AI Agents Primary Purpose Content generation Autonomous task execution Human Dependency High Lower Memory Limited Persistent memory possible Decision-Making Minimal Advanced Tool Usage Usually standalone Integrates tools & APIs Workflow Automation Limited Extensive Autonomy Reactive Proactive Goal-Oriented Sometimes Strongly goal-driven Real-World Examples of Generative AI Content Marketing Businesses use generative AI for: SEO blogs Email campaigns Ad copy Product descriptions Software Development AI coding assistants generate: Code snippets Documentation Bug fixes Test cases Examples include: GitHub Copilot OpenAI Codex Design and Media AI-generated visuals, videos, and audio are transforming creative industries. Customer Support Chatbots powered by generative AI answer customer questions in natural language. Real-World Examples of AI Agents Autonomous Customer Support Agents AI agents can: Resolve tickets Access databases Trigger workflows Schedule follow-ups AI Research Agents Agents gather information from multiple sources and summarize findings automatically. Sales Automation Agents AI agents can: Qualify leads Send outreach emails Update CRMs Schedule meetings Software Engineering Agents Advanced coding agents can: Write code Run tests Debug applications Deploy software Benefits of Generative AI Increased Productivity Teams generate content significantly faster. Lower Operational Costs Automation reduces manual creative workloads. Enhanced Creativity AI assists with ideation and innovation. Scalability Businesses can produce content at scale. Limitations of Generative AI Hallucinations Generative AI may create inaccurate or fabricated information. Lack of True Understanding Models predict patterns rather than truly understanding concepts. Limited Autonomy Most generative AI systems require prompts and human supervision. Context Limitations Long-term memory is often weak or unavailable. Benefits of AI Agents End-to-End Automation AI agents execute complete workflows autonomously. Continuous Learning Agents can improve through feedback and interaction. Operational Efficiency Businesses reduce repetitive manual tasks. Intelligent Decision-Making Agents analyze data and optimize outcomes. Limitations of AI Agents Complexity Building robust AI agents is technically challenging. Security Risks Autonomous systems require strong governance and safeguards. Infrastructure Requirements AI agents often require: APIs Databases Orchestration systems Monitoring frameworks Reliability Concerns Poorly designed agents may make incorrect decisions. How AI Agents and Generative AI Work Together In reality, many advanced AI systems combine both technologies. Generative AI often acts as the “brain” for AI agents by providing: Natural language understanding Content generation Reasoning support Meanwhile, AI agents provide: Autonomy Planning Action execution Workflow management For example: An AI agent receives a customer support request Uses generative AI to draft a response Accesses databases Updates support tickets Sends emails automatically This combination is driving the next wave of intelligent automation. Industries Adopting AI Agents and Generative AI Healthcare Hospitals use AI for: Medical documentation Diagnostic assistance Patient support automation Finance Banks deploy AI for: Fraud detection Financial analysis Customer service automation E-Commerce Retailers use AI for:

Introduction Artificial Intelligence has transformed the way machines perceive and interact with the world. From autonomous vehicles to smart surveillance systems, object detection models play a crucial role in enabling machines to recognize and understand visual data. Among the most influential families of object detection algorithms is the YOLO series, which stands for “You Only Look Once.” Over the years, YOLO models have become synonymous with speed, efficiency, and accuracy. However, traditional YOLO systems were limited to detecting predefined object categories. If a model was not trained on a specific object class, it could not recognize it. This limitation led researchers to develop more advanced solutions capable of recognizing unseen objects using textual descriptions. One of the most exciting breakthroughs in this field is the YOLO-World model — an open-vocabulary real-time object detection framework that bridges the gap between vision and language understanding. YOLO-World combines the speed of the YOLO family with the flexibility of vision-language models, enabling AI systems to detect virtually any object described by text prompts without requiring retraining. In this comprehensive guide, we will explore everything about YOLO-World, including its architecture, working mechanism, advantages, challenges, use cases, and future potential. What Is YOLO-World? YOLO-World is an advanced open-vocabulary object detection model designed to perform real-time detection of objects using natural language prompts. Unlike conventional object detection systems that can only recognize categories present in their training datasets, YOLO-World can identify unseen objects by understanding textual descriptions. This capability is known as open-vocabulary detection. For example, instead of being restricted to labels such as: Person Car Dog Bicycle YOLO-World can detect: Red electric scooter Construction worker wearing helmet Blue ceramic mug Drone with camera Golden retriever puppy This makes the model significantly more flexible and practical for real-world AI applications. Understanding Open-Vocabulary Object Detection Traditional object detectors rely on fixed label sets. These systems are trained using annotated datasets containing predefined classes. The challenge arises when new objects appear that were not part of training data. Open-vocabulary detection solves this issue by integrating language understanding into object detection systems. Instead of depending solely on predefined labels, the model can interpret human language descriptions and map them to visual features. This means the model can detect unseen categories dynamically using prompts. For example: “Find all laptops on the table” “Detect firefighters” “Locate orange traffic cones” The system understands both language and image content simultaneously. The Evolution of YOLO Models The YOLO family has evolved significantly over time. YOLOv1 Introduced the single-stage detection paradigm for real-time object detection. YOLOv2 and YOLOv3 Improved accuracy, anchor boxes, and multi-scale prediction. YOLOv4 and YOLOv5 Enhanced efficiency and deployment flexibility. YOLOv6, YOLOv7, and YOLOv8 Focused on speed optimization, edge AI deployment, and scalability. YOLO-World Introduced open-vocabulary detection by integrating vision-language capabilities into the YOLO framework. YOLO-World represents a major leap forward because it combines: Real-time inference Open-vocabulary recognition Vision-language alignment Efficient deployment How YOLO-World Works YOLO-World merges traditional object detection pipelines with language-aware embeddings. The system consists of several core components: 1. Image Encoder The image encoder extracts visual features from input images. It identifies patterns such as: Shapes Textures Colors Object boundaries These features are converted into numerical representations called embeddings. 2. Text Encoder The text encoder processes textual prompts. For example: “Cat” “Red sports car” “Airport luggage” The text descriptions are transformed into semantic embeddings. 3. Vision-Language Alignment The visual embeddings and text embeddings are aligned within a shared feature space. This allows the model to compare image regions with textual descriptions and determine matches. 4. Detection Head The detection head predicts: Bounding boxes Confidence scores Semantic similarity scores The model outputs object locations corresponding to text prompts. Key Features of YOLO-World Real-Time Performance YOLO-World maintains the high-speed inference capabilities of the YOLO family. This enables deployment in: Autonomous systems Smart cameras Robotics Edge AI devices Open-Vocabulary Recognition The model can detect unseen objects without retraining. Users simply provide new prompts. Zero-Shot Detection YOLO-World performs zero-shot learning by recognizing categories absent from training datasets. Flexible Deployment The model supports: Cloud environments Edge devices Embedded systems GPUs Industrial AI pipelines Language-Guided Detection Text prompts enable highly customized object detection. Examples include: “Damaged package” “People wearing masks” “Electric vehicles” YOLO-World Architecture Explained The architecture of YOLO-World is designed to balance speed and semantic understanding. Backbone Network The backbone extracts image features. Common backbones include: CSPDarknet EfficientNet Vision Transformers Neck Network The neck combines features from multiple scales. This improves detection for: Small objects Large objects Complex scenes Multi-Modal Fusion Layer This is the core innovation. The fusion layer integrates: Visual embeddings Text embeddings The model learns semantic relationships between language and visual regions. Detection Head The final stage predicts object localization and matching scores. Advantages of YOLO-World 1. Unlimited Object Categories Traditional models are limited by training labels. YOLO-World can recognize virtually any object described in text. 2. Reduced Retraining Costs Organizations no longer need to retrain models for every new category. This dramatically reduces: Annotation costs Training time Infrastructure expenses 3. Better Scalability YOLO-World scales efficiently for enterprise AI systems. 4. Enhanced User Interaction Users interact naturally using language prompts. 5. Improved Generalization The model generalizes better to unseen environments. YOLO-World vs Traditional YOLO Models Feature Traditional YOLO YOLO-World Fixed Categories Yes No Open-Vocabulary No Yes Text Prompt Support No Yes Zero-Shot Detection Limited Strong Real-Time Speed Excellent Excellent Language Understanding None Advanced YOLO-World vs CLIP-Based Detection Models YOLO-World is often compared with CLIP-powered systems. CLIP-Based Models CLIP excels at image-text understanding but often lacks real-time detection efficiency. YOLO-World Advantages YOLO-World provides: Faster inference Better localization Real-time object detection Edge deployment capabilities Applications of YOLO-World Autonomous Vehicles YOLO-World can identify unexpected road objects using text prompts. Examples include: Fallen tree branches Electric scooters Construction barriers Smart Surveillance Security systems can detect: Suspicious activities Safety violations Unauthorized objects Retail Analytics Retailers can track: Product categories Shelf inventory Customer behavior Robotics Robots can understand flexible commands such as: “Pick up the red bottle” “Find the toolbox” Healthcare Medical imaging systems can assist in

Introduction Data collection has become one of the most critical components of artificial intelligence, business intelligence, automation, and digital transformation. Organizations today rely heavily on accurate, scalable, and real-time data to train machine learning models, optimize operations, understand customer behavior, and make informed decisions. However, traditional data collection methods often involve significant manual effort, high operational costs, inconsistent quality, and long turnaround times. This is where Agent AI is changing the landscape. Agent AI, also known as Agentic AI, refers to intelligent systems capable of acting autonomously to complete tasks, make decisions, communicate with systems, and continuously improve workflows. Unlike traditional automation tools that follow static instructions, AI agents can analyze environments, understand goals, adapt to changing conditions, and collaborate with other agents or humans. When applied to data collection, Agent AI creates powerful opportunities for businesses across industries. AI agents can gather structured and unstructured data from multiple sources, validate information, organize datasets, monitor quality, automate labeling tasks, interact with APIs, scrape public information responsibly, conduct surveys, process multimedia content, and even coordinate crowdsourcing operations. From healthcare and retail to automotive, finance, agriculture, education, and smart cities, companies are adopting AI agents to improve efficiency, accelerate data pipelines, and reduce operational bottlenecks. In this comprehensive guide, we will explore how to use Agent AI for data collection, including: What Agent AI is Why Agent AI matters in modern data collection Core components of AI-driven data collection systems Step-by-step implementation process Best tools and technologies Industry use cases Challenges and ethical considerations Best practices for scalable deployment Future trends in agentic AI systems Whether you are a startup, enterprise, AI developer, researcher, or AI data solutions provider, this guide will help you understand how Agent AI can transform the way you collect and manage data. What is Agent AI? Agent AI refers to autonomous software systems designed to achieve goals with minimal human intervention. These systems can reason, plan, communicate, learn, and execute tasks dynamically. Unlike traditional rule-based automation, Agent AI systems are adaptive. They can: Analyze objectives Break tasks into smaller subtasks Interact with external systems Make decisions based on context Learn from outcomes Optimize workflows continuously An AI agent can operate independently or as part of a multi-agent ecosystem where several intelligent agents collaborate to achieve larger objectives. Core Characteristics of Agent AI 1. Autonomy AI agents can execute tasks without constant human supervision. 2. Goal-Oriented Behavior Agents work toward achieving defined objectives. 3. Context Awareness AI agents understand contextual information and adapt their actions accordingly. 4. Decision-Making Capability They evaluate options and select the best course of action. 5. Learning Ability Many AI agents improve over time using machine learning and reinforcement learning. 6. Communication AI agents can communicate with APIs, databases, cloud systems, and even humans. Understanding Data Collection in the AI Era Data collection involves gathering information from various sources for analysis, machine learning, reporting, or operational purposes. Modern organizations collect multiple types of data, including: Text data Audio recordings Video footage Images Sensor data LiDAR data Geospatial data Medical data Customer interactions Social media content Transactional data IoT device information The explosion of digital information has made manual collection methods increasingly inefficient. Challenges of Traditional Data Collection Traditional methods often face several limitations: Time Consumption Manual collection and annotation require extensive human labor. Scalability Issues Large-scale projects become difficult to manage. Data Quality Problems Human errors can reduce consistency. High Costs Enterprises spend significant budgets on workforce management. Delayed Insights Slow collection delays business decisions. Limited Real-Time Capability Manual systems cannot efficiently handle real-time streams. Agent AI addresses these limitations by introducing intelligent automation into every stage of the data lifecycle. Why Use Agent AI for Data Collection? Agent AI provides transformative benefits for modern enterprises. 1. Automation at Scale AI agents can process massive amounts of data simultaneously across multiple platforms. For example: Scraping websites Monitoring sensors Collecting IoT streams Organizing cloud storage Extracting structured information from documents 2. Faster Data Pipelines Agent AI dramatically reduces data collection time. Tasks that previously took weeks can now be completed in hours. 3. Improved Data Accuracy AI agents use validation rules, anomaly detection, and quality checks to improve consistency. 4. Real-Time Data Collection AI agents can continuously monitor live systems and instantly collect incoming information. This is especially valuable for: Financial trading Smart cities Autonomous vehicles Healthcare monitoring Cybersecurity systems 5. Reduced Operational Costs Organizations can reduce manual labor costs while improving efficiency. 6. Intelligent Decision-Making AI agents can decide which data sources are relevant and prioritize high-value information. 7. Multi-Source Integration Agents can combine data from: APIs Databases Sensors Web applications Cloud systems Mobile apps Enterprise platforms How Agent AI Works in Data Collection Agent AI systems follow an intelligent workflow. Step 1: Define Objectives The organization defines goals such as: Collect customer reviews Monitor traffic data Gather medical images Build training datasets Analyze user behavior Step 2: Task Planning The AI agent breaks the objective into smaller tasks. For example: Identify sources Access databases Extract data Clean records Validate quality Store results Step 3: Source Identification The agent identifies appropriate data sources. These may include: Public websites APIs Enterprise databases IoT devices Cloud systems Video feeds Annotation platforms Step 4: Data Extraction The agent gathers information automatically. Methods include: API integration Web scraping Sensor communication OCR extraction Speech recognition Video processing Step 5: Data Cleaning The AI agent removes: Duplicates Corrupted records Missing values Invalid formats Step 6: Data Validation Agents verify quality using: Statistical analysis Pattern recognition Rule-based checks Human review workflows Step 7: Storage and Organization Collected data is organized into: Databases Cloud storage Data lakes AI training repositories Step 8: Continuous Learning AI agents analyze performance and improve future collection strategies. Types of Agent AI Used for Data Collection 1. Web Scraping Agents These agents gather information from websites. Use cases include: Market research Price monitoring Competitor analysis News aggregation 2. Conversational AI Agents Chatbots and voice assistants collect customer information. Examples: Customer support interactions Survey automation User feedback

Introduction Object detection has come a long way—from early R-CNN architectures to real-time, production-grade models capable of running on edge devices and cloud infrastructures simultaneously. In 2026, YOLO26 represents the cutting edge of this evolution, bringing unmatched speed, accuracy, and scalability. At the same time, cloud-based machine learning platforms have matured. Among them, Azure Machine Learning (AzureML) stands out as a powerful ecosystem for building, training, deploying, and monitoring AI models at scale. This blog explores how YOLO26 and AzureML together create a robust, enterprise-grade object detection pipeline, covering everything from fundamentals to advanced deployment strategies. 1. Understanding YOLO26 1.1 What is YOLO26? YOLO (You Only Look Once) has always been about real-time detection. YOLO26 builds on previous versions with: Transformer-enhanced backbone Multi-scale detection heads Efficient attention mechanisms Improved small-object detection Native support for edge + cloud hybrid deployment YOLO26 is not just an incremental improvement—it is designed for production-first AI systems. 1.2 Key Features of YOLO26 ⚡ Ultra-Fast Inference YOLO26 achieves near real-time inference even on large datasets and high-resolution inputs. 🎯 High Accuracy Improved bounding box regression and classification heads increase mAP scores significantly. 🧠 Hybrid Architecture Combines CNNs with lightweight transformers for better contextual understanding. 📦 Modular Design Allows integration with: Custom datasets Cloud pipelines Edge devices 1.3 YOLO26 vs Previous Versions Feature YOLOv8 YOLOv12 YOLO26 Speed Fast Faster Fastest Accuracy High Very High State-of-the-art Transformer Integration ❌ Partial ✅ Cloud Optimization Limited Moderate Full 2. Introduction to Azure Machine Learning (AzureML) 2.1 What is AzureML? AzureML is a cloud-based platform that enables: Model training Experiment tracking Dataset management Deployment pipelines Monitoring and governance 2.2 Why Use AzureML for YOLO26? Scalability Train YOLO26 on: Single GPU Multi-node clusters Distributed environments MLOps Integration CI/CD pipelines Version control Experiment tracking Managed Infrastructure No need to manually configure: GPUs Networking Storage 3. Setting Up YOLO26 on AzureML 3.1 Prerequisites Before starting, ensure you have: Azure subscription AzureML workspace Python environment (3.9+) GPU-enabled compute instance 3.2 Creating AzureML Workspace Steps: Go to Azure Portal Create resource → Machine Learning Configure: Resource group Region Workspace name 3.3 Setting Up Compute AzureML provides: CPU clusters GPU clusters (recommended for YOLO26) Compute instances for development Recommended: Standard_NC or ND series GPUs 3.4 Installing YOLO26 Environment pip install yolo26 pip install azure-ai-ml pip install torch torchvision 4. Data Preparation for YOLO26 4.1 Dataset Structure YOLO26 uses standard format: dataset/ ├── images/ │ ├── train/ │ ├── val/ ├── labels/ │ ├── train/ │ ├── val/ 4.2 Annotation Format Each label file: class_id x_center y_center width height 4.3 Uploading Data to AzureML from azure.ai.ml import MLClient from azure.identity import DefaultAzureCredential ml_client = MLClient(DefaultAzureCredential(), subscription_id, resource_group, workspace) data = ml_client.data.create_or_update(…) 5. Training YOLO26 on AzureML 5.1 Training Script from yolo26 import YOLO model = YOLO("yolo26.pt") model.train( data="data.yaml", epochs=100, imgsz=640, batch=16 ) 5.2 Running Training on AzureML Use job submission: from azure.ai.ml import command job = command( code="./src", command="python train.py", environment="yolo26-env", compute="gpu-cluster" ) ml_client.jobs.create_or_update(job) 5.3 Distributed Training AzureML supports multi-node training: Data parallelism Model parallelism YOLO26 benefits from distributed GPU scaling. 6. Hyperparameter Tuning 6.1 Key Parameters Learning rate Batch size Image size Augmentation strategies 6.2 AzureML Hyperparameter Sweep from azure.ai.ml.sweep import Choice sweep_job = command( … sweep=dict( sampling_algorithm="random", objective=dict(goal="maximize", primary_metric="mAP"), search_space={ "lr": Choice([0.001, 0.01]), } ) ) 7. Model Evaluation 7.1 Metrics mAP (mean Average Precision) Precision / Recall F1 Score 7.2 Visualization Confusion matrix Bounding box predictions Error analysis 8. Deploying YOLO26 on AzureML 8.1 Deployment Options Real-Time Endpoints Low latency API-based inference Batch Endpoints Large-scale processing 8.2 Deployment Code from azure.ai.ml.entities import ManagedOnlineEndpoint endpoint = ManagedOnlineEndpoint( name="yolo26-endpoint" ) ml_client.begin_create_or_update(endpoint) 8.3 Inference Script def run(data): results = model(data) return results 9. MLOps for YOLO26 9.1 Versioning Track: Datasets Models Experiments 9.2 CI/CD Pipelines Use: GitHub Actions Azure DevOps 9.3 Monitoring Monitor: Drift Latency Accuracy 10. Performance Optimization 10.1 Techniques Model pruning Quantization Mixed precision training 10.2 GPU Optimization Use TensorRT Optimize batch size 11. Real-World Use Cases 11.1 Autonomous Vehicles Real-time object detection Lane tracking 11.2 Retail Analytics Customer behavior analysis Shelf monitoring 11.3 Healthcare Medical imaging detection 11.4 Smart Cities Traffic management Surveillance systems 12. Edge + Cloud Integration YOLO26 supports: Edge inference (IoT devices) Cloud retraining (AzureML) 13. Security and Compliance AzureML provides: Role-based access control Data encryption Compliance certifications 14. Cost Optimization Tips: Use spot instances Auto-scale clusters Optimize training epochs 15. Challenges and Solutions Challenge Solution Large dataset Use Azure Blob Storage Training cost Distributed training Model drift Continuous monitoring 16. Future of YOLO + AzureML Trends: Fully automated pipelines Self-improving models Integration with generative AI Edge-first architectures Conclusion YOLO26 combined with AzureML creates a powerful, scalable, and production-ready computer vision ecosystem. Whether you’re building: Real-time applications Enterprise AI pipelines Edge-cloud hybrid systems This combination gives you the flexibility, performance, and reliability needed in 2026 and beyond. Frequently Asked Questions (FAQ) About YOLO26 on AzureML 1. What is YOLO26? YOLO26 is a next-generation object detection model designed for ultra-fast and highly accurate real-time computer vision applications. It improves upon earlier YOLO versions with enhanced transformer-based architecture, better small-object detection, and optimized cloud deployment capabilities. 2. Why should I use AzureML for YOLO26? Azure Machine Learning provides: Scalable GPU infrastructure Automated MLOps pipelines Experiment tracking Distributed training Easy deployment endpoints Enterprise-grade security This makes it ideal for training and deploying large-scale YOLO26 models. 3. Can YOLO26 run in real time on Azure? Yes. YOLO26 is optimized for low-latency inference and can run in real time using: Azure GPU VMs Managed online endpoints Edge devices connected to Azure IoT Many deployments achieve inference speeds below 20 milliseconds depending on hardware configuration. 4. What GPU is recommended for YOLO26 training on AzureML? Recommended GPU options include: NVIDIA A100 NVIDIA V100 NVIDIA H100 Azure ND-series instances For enterprise-scale training, multi-GPU distributed clusters provide the best performance. 5. Is YOLO26 suitable for edge AI applications? Absolutely. YOLO26 supports: Edge inference Quantization TensorRT optimization ONNX export This allows deployment on: Drones Smart cameras Autonomous robots IoT devices 6. How much does it cost to train YOLO26 on

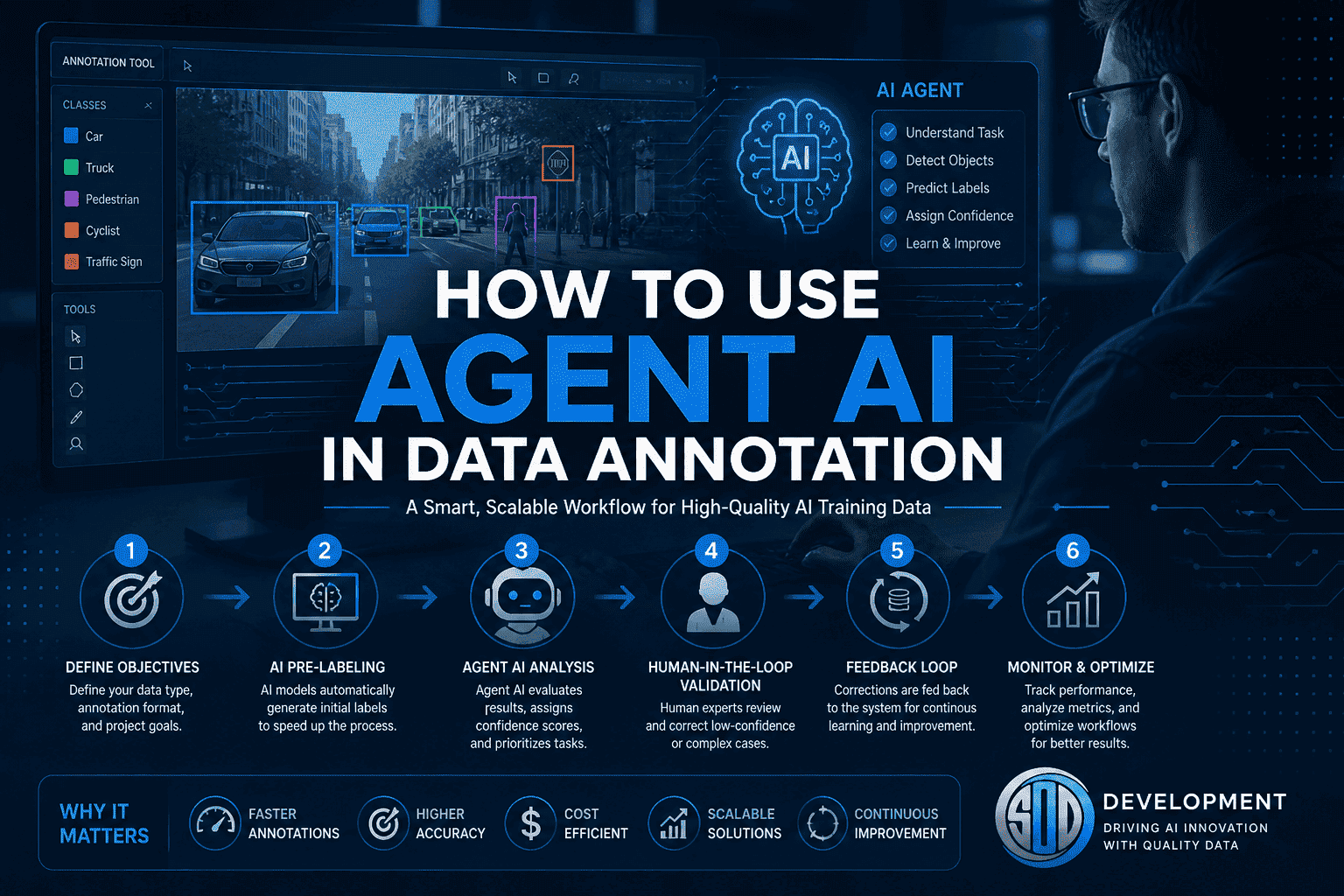

Introduction Data annotation has long been the backbone of artificial intelligence. Whether you’re building computer vision systems, training large language models, or developing autonomous vehicles, high-quality labeled data is non-negotiable. But traditional annotation methods—manual labeling, rigid workflows, and heavy human dependency—are no longer sufficient to meet today’s scale and complexity. Enter Agent AI. Agent AI is transforming how data annotation is performed by introducing autonomous, semi-autonomous, and collaborative AI systems that can plan, reason, and execute annotation tasks with minimal human intervention. Instead of simply labeling data, AI agents can now understand context, make decisions, and continuously improve. This blog explores how to use Agent AI in data annotation, including architecture, workflows, tools, benefits, challenges, and real-world use cases. What is Agent AI? Agent AI refers to intelligent systems designed to perform tasks autonomously by: Perceiving data (images, text, audio, video) Making decisions based on context Executing actions (labeling, validating, correcting) Learning from feedback Unlike traditional machine learning models, Agent AI systems are: Goal-oriented Context-aware Capable of multi-step reasoning Interactive with humans and other agents These agents are often powered by large language models (LLMs), computer vision models, and reinforcement learning. Why Agent AI Matters in Data Annotation Traditional annotation challenges include: High cost and time consumption Human inconsistency and bias Difficulty scaling to millions of data points Complex multi-modal data handling Agent AI solves these by: Automating repetitive tasks Improving labeling consistency Reducing turnaround time Enabling dynamic and adaptive workflows Core Components of Agent AI Annotation Systems To effectively use Agent AI in data annotation, you need to understand its architecture: 1. Perception Layer This includes models that process raw data: Computer vision models (for images/videos) Speech recognition (for audio) NLP models (for text) 2. Reasoning Engine This is where the “agent” becomes intelligent: LLM-based reasoning (e.g., task interpretation) Rule-based systems Context-aware decision-making 3. Action Module Executes annotation tasks: Bounding boxes Semantic segmentation Text classification Named entity recognition (NER) 4. Memory and Feedback Loop Stores previous annotations Learns from corrections Improves over time 5. Human-in-the-Loop Interface Humans validate edge cases Provide feedback Handle ambiguity How to Use Agent AI in Data Annotation (Step-by-Step) Step 1: Define Annotation Objectives Start by clearly defining: Type of data (image, text, audio, video) Annotation format (bounding boxes, polygons, tags, transcripts) Quality requirements (accuracy thresholds) Example: Annotating medical images for tumor detection Labeling customer sentiment in chat data Step 2: Select the Right AI Models Choose models based on your data: Computer Vision → YOLO, SAM, Detectron NLP → Transformer-based models (LLMs) Audio → Whisper-like models These models act as the foundation for your agent system. Step 3: Design the Agent Workflow Instead of a linear pipeline, Agent AI uses dynamic workflows: Example Workflow: Agent reads task instructions Pre-labeling model generates initial annotations Agent evaluates confidence score If confidence is high → accept If low → send to human reviewer Agent learns from corrections Step 4: Implement Multi-Agent Collaboration You can use multiple agents for different roles: Annotation Agent → Labels data Validation Agent → Checks quality Correction Agent → Fixes errors Supervisor Agent → Manages workflow This modular approach improves scalability and accuracy. Step 5: Integrate Human-in-the-Loop Even the best agents need human oversight. Use humans for: Edge cases Ambiguous data Quality audits Best practice: Only escalate low-confidence cases to humans Continuously retrain agents using human feedback Step 6: Build Feedback and Learning Loops Agent AI systems improve over time through: Reinforcement learning Active learning Continuous fine-tuning Example:If a human corrects a bounding box, the agent stores this correction and updates its future predictions. Step 7: Monitor and Optimize Performance Track key metrics: Annotation accuracy Speed (labels/hour) Cost per annotation Human intervention rate Use dashboards and analytics to continuously refine your system. Real-World Use Cases 1. Autonomous Driving Annotating LiDAR and video data Agents handle object detection and tracking Humans validate rare scenarios 2. Healthcare AI Labeling medical images Extracting clinical entities from text Ensuring compliance and precision 3. E-commerce Product categorization Image tagging Customer sentiment analysis 4. Conversational AI Intent classification Entity extraction Dialogue annotation Tools and Platforms for Agent AI Annotation Popular tools include: CVAT Labelbox Supervisely Roboflow These platforms can be extended with Agent AI capabilities using APIs and LLM integrations. Benefits of Using Agent AI in Annotation 1. Scalability Handle millions of data points efficiently. 2. Cost Reduction Reduce reliance on large annotation teams. 3. Speed Accelerate project timelines significantly. 4. Consistency Minimize human variability. 5. Continuous Improvement Agents learn and improve with time. Challenges and Limitations Despite its advantages, Agent AI comes with challenges: 1. Initial Setup Complexity Designing agent workflows requires expertise. 2. Model Bias Agents may inherit biases from training data. 3. Quality Control Over-reliance on automation can reduce accuracy if not monitored. 4. Data Privacy Sensitive data requires strict governance. Best Practices To successfully implement Agent AI: Start with pilot projects Use hybrid human-AI workflows Focus on high-impact use cases first Continuously evaluate performance Invest in training and infrastructure Future of Agent AI in Data Annotation The future is moving toward: Fully autonomous annotation systems Multi-modal agents handling text, image, and video together Self-improving pipelines with minimal human intervention Integration with real-time AI systems Agent AI will not replace humans—but will augment human capabilities, making annotation faster, smarter, and more scalable. How SO Development Can Help At SO Development, we specialize in advanced AI data solutions, including: Agent AI-powered annotation workflows Large-scale data collection and labeling Multi-modal annotation (LiDAR, image, text, audio) Custom AI pipeline development With over 600+ projects and expert annotators, we combine human expertise with intelligent automation to deliver high-quality datasets for your AI models. Conclusion Agent AI is redefining data annotation by introducing intelligence, autonomy, and adaptability into the process. By combining machine efficiency with human judgment, organizations can achieve faster, cheaper, and more accurate annotation at scale. If you’re looking to stay competitive in the AI space, adopting Agent AI in your annotation workflow is no longer optional—it’s essential. Frequently Asked Questions (FAQ) 1. What is Agent AI in

Introduction Artificial intelligence is no longer just about generating text or recognizing images. The real shift happening in 2026 is the rise of AI agents—systems that don’t just respond, but act. These agents can plan tasks, use tools, interact with software, and execute workflows with minimal human input. In other words, they are becoming digital workers. This blog explores: What AI agents actually are (beyond the hype) Why they matter now Where they are being used And the top 10 companies building AI agents today What Is Agent AI? The term “AI agent” is often overused, so let’s clarify it properly. An AI agent is a system that can: Understand a goal Break it into steps Decide what actions to take Execute those actions using tools or software Adapt based on results Unlike traditional AI models (which are reactive), agents are goal-driven and proactive. A Simple Example If you ask a chatbot: “Summarize this report” → it gives you text If you ask an AI agent: “Analyze this report, identify risks, create a presentation, and email it to my team” It can actually: Read the document Extract insights Generate slides Send the email That difference—from answering to doing—is what defines agent AI. Why AI Agents Matter in 2026 Three major shifts are driving adoption: 1. Labor Automation Is Moving Up the Stack We are no longer automating repetitive tasks only—AI agents are now handling: Research Analysis Decision support End-to-end workflows 2. LLMs Became Capable Enough Modern models can: Reason across steps Use tools via APIs Maintain context This made agents practical—not just experimental. 3. Enterprises Need Efficiency Companies are under pressure to: Reduce costs Increase output Operate 24/7 AI agents solve all three. Key Capabilities of Modern AI Agents The best AI agents today share a common architecture: Planning They decompose complex goals into executable steps. Tool Use They interact with: APIs CRMs databases web browsers Memory They store context across sessions, improving consistency. Autonomy They operate with minimal supervision. Multi-Agent Collaboration Advanced systems use multiple agents working together, each specialized in a task. Top 10 AI Agent Companies in 2026 SO Development – The Data-Driven Leader in AI Agents Most companies in this space focus heavily on models and frameworks.SO Development takes a different—and more practical—approach: they start with the data. That matters more than most people realize. Why This Matters AI agents fail in production not because of bad models, but because of: Poor training data Lack of domain specificity Weak evaluation pipelines SO Development addresses this at the foundation. What They Do Well Build custom AI agents tailored to real business workflows Provide end-to-end pipelines: Data collection Annotation Model training Deployment Support multiple AI domains: NLP Computer vision Multimodal systems LiDAR and 3D data Where They Stand Out Their agents are not generic—they are: Domain-trained Production-ready Optimized for accuracy and scale This makes them particularly strong for: Enterprises AI-heavy products Complex automation environments OpenAI — The Foundation Model Powerhouse OpenAI plays a central role in the AI agent ecosystem. They don’t just build agents—they build the models that power them. Strengths Advanced reasoning models Strong developer ecosystem Rapid innovation cycles Limitation They provide the “brain,” but companies still need partners to: Customize integrate deploy agents in real workflows Cognition Labs — Autonomous Software Engineering Cognition became widely known for building an AI agent capable of: Writing code Debugging Running development workflows Why It Matters This is one of the first real examples of end-to-end autonomous work in software engineering. Adept AI — Human-Like Software Interaction Adept focuses on agents that can: Use tools like humans Navigate interfaces Execute tasks across applications This approach avoids heavy integrations and instead mimics real user behavior. Teammates.ai — Digital Employees Teammates.ai positions its agents as: “AI teammates” They offer pre-built agents for: Sales Recruitment customer support Strong focus on plug-and-play business automation. Lindy.ai — No-Code Agent Builder Lindy.ai lowers the barrier to entry. Users can build agents without coding, making it ideal for: startups operations teams non-technical users H Company — Autonomous Computer Control H Company is working on agents that: Control computers directly Perform actions like clicking, typing, navigating This is critical for environments where APIs are limited. MarcelHeap — Custom AI for Businesses MarcelHeap focuses on: tailored AI agent solutions industry-specific implementations Best suited for companies that need custom builds without building in-house teams. Binar Code — Agile AI Deployment BinarCode emphasizes: fast implementation adaptable architectures They are strong in rapidly evolving environments. Parallel Web Systems — The Backend Layer Parallel builds infrastructure that allows agents to: run long tasks access web environments execute complex workflows They focus on the “operating system” for AI agents. Where AI Agents Are Already Delivering Value Customer Support Agents handle entire conversations and resolve issues without escalation. Operations They automate internal workflows across tools and departments. Finance Used for: fraud detection reporting analysis Software Development Agents now assist with: coding testing debugging Data Processing They clean, analyze, and structure large datasets autonomously. Benefits (and Reality Check) What AI Agents Do Well Reduce manual work Speed up execution Operate continuously Scale easily Where They Still Struggle Ambiguous tasks Poor data environments Complex edge cases This is exactly why data-centric companies outperform model-centric ones in real deployments. How to Choose the Right AI Agent Company If you’re evaluating vendors, focus on: 1. Data Strategy Do they handle training data properly? 2. Customization Can they adapt to your workflows? 3. Integration Will the agent work with your systems? 4. Reliability Is it production-ready—or just a demo? 5. Scalability Can it grow with your business? Final Thoughts AI agents are not a future concept anymore—they are already reshaping how work gets done. But there’s a clear divide in the market: Some companies build impressive demos Others build systems that actually work in production That’s where SO Development stands out. By focusing on: data quality real-world deployment domain-specific training They deliver AI agents that don’t just look good—but perform reliably at scale. Frequently Asked Questions (FAQ) 1. What

Introduction Artificial Intelligence has rapidly evolved over the past decade. Initially, most systems were designed as single-agent models, where one AI handled a specific task—classification, prediction, or automation. But real-world problems are rarely that simple. Modern challenges—like global logistics, autonomous driving, financial markets, and climate systems—require multiple decision-makers operating simultaneously. This is where multi-agent systems (MAS) come in. Rather than relying on a single “super-intelligence,” MAS distributes intelligence across multiple autonomous agents that interact, collaborate, and adapt in real time. This shift represents one of the most important transformations in AI: From isolated intelligence → to collaborative intelligence. What Are Multi-Agent Systems? A multi-agent system is a collection of independent computational entities—called agents—that operate within a shared environment. Each agent: Has its own goals or objectives Perceives the environment Makes decisions independently Interacts with other agents These agents can: Cooperate Compete Coexist with partial alignment The overall system behavior emerges from these interactions, often producing outcomes more sophisticated than any single agent could achieve. The Core Concept: Emergence One of the defining features of MAS is emergent behavior. This means: The system exhibits intelligence at a higher level than individual agents Complex patterns arise from simple rules Examples: Ant colonies organizing without central control Traffic flow optimization through decentralized signals Market dynamics driven by independent traders In AI, emergence allows systems to: Solve problems dynamically Adapt without centralized oversight Scale efficiently Key Components of Multi-Agent Systems 1. Agents Agents are the building blocks of MAS. They can vary widely in complexity: Types of Agents: Reactive agents – respond to stimuli without memory Deliberative agents – plan actions based on internal models Learning agents – improve over time using data Hybrid agents – combine multiple approaches Each agent typically includes: Sensors (input) Actuators (output) Decision-making logic Knowledge base 2. Environment The environment is where agents operate. Types of Environments: Physical (robots, drones) Digital (software systems, simulations) Hybrid (IoT systems combining both) Environment properties: Static vs dynamic Deterministic vs stochastic Fully observable vs partially observable 3. Communication Agents must exchange information to function effectively. Communication Methods: Message passing Shared memory APIs Event-driven systems Protocols: Structured languages (ACL – Agent Communication Language) Negotiation protocols Auction mechanisms 4. Coordination Mechanisms Coordination ensures agents work efficiently together. Common approaches: Task allocation Consensus algorithms Market-based coordination Rule-based systems 5. Decision-Making Models Agents use various strategies: Rule-based systems Optimization algorithms Machine learning models Reinforcement learning Types of Multi-Agent Systems 1. Cooperative Systems Agents share a common goal. Example: Warehouse robots working together to fulfill orders. Key Features: Shared rewards High communication Strong coordination 2. Competitive Systems Agents have conflicting objectives. Example: Algorithmic trading bots competing in financial markets. Key Features: Strategic behavior Game theory Limited information sharing 3. Mixed Systems Most real-world systems fall into this category. Example: Ride-sharing platforms: Drivers cooperate with the system Compete with each other 4. Hierarchical Systems Agents are organized in layers. Structure: High-level agents (decision-makers) Low-level agents (executors) 5. Swarm Intelligence Systems Inspired by nature (ants, bees, birds). Characteristics: Simple agents No central control Emergent coordination Architectures of Multi-Agent Systems Centralized vs Decentralized Centralized: One controller coordinates agents Easier to manage Less scalable Decentralized: No central authority Agents act independently Highly scalable and robust Distributed Architecture Agents are distributed across networks. Benefits: Fault tolerance Parallel processing Geographic scalability Hybrid Architecture Combines centralized and decentralized approaches. Algorithms Used in Multi-Agent Systems 1. Game Theory Used in competitive environments. Concepts: Nash equilibrium Zero-sum games Strategy optimization 2. Reinforcement Learning (Multi-Agent RL) Agents learn through interaction. Types: Cooperative RL Competitive RL Self-play 3. Consensus Algorithms Used for agreement among agents. Examples: Voting mechanisms Distributed consensus 4. Auction Algorithms Agents bid for tasks or resources. Applications: Logistics Cloud computing 5. Evolutionary Algorithms Agents evolve strategies over time. Real-World Applications 1. Autonomous Vehicles Cars act as agents: Communicate with each other Share traffic data Prevent accidents Future: Fully coordinated traffic ecosystems 2. Smart Cities Agents manage: Traffic lights Energy consumption Waste systems 3. Healthcare Systems Applications: Patient monitoring agents Diagnostic assistants Resource allocation 4. Finance and Trading Agents: Analyze market data Execute trades Manage risk 5. Supply Chain and Logistics Agents represent: Suppliers Warehouses Delivery routes Outcome: Optimized delivery Reduced costs 6. Robotics and Swarms Examples: Drone fleets Agricultural robots Disaster response 7. Gaming and Simulation NPCs behave independently, creating realistic worlds. 8. Cybersecurity Agents: Detect threats Respond autonomously Adapt to new attacks Challenges of Multi-Agent Systems 1. Coordination Complexity As agents increase, interactions grow exponentially. 2. Communication Overhead Too much messaging slows performance. 3. Conflict Resolution Agents may: Compete for resources Have conflicting goals 4. Security Risks Distributed systems are vulnerable to: Attacks Data breaches 5. Debugging and Testing Hard to trace: Emergent behavior System-wide bugs 6. Ethical Concerns Questions arise: Who is responsible for decisions? How to ensure fairness? Multi-Agent Systems vs Single-Agent AI Key Differences Aspect Single-Agent Multi-Agent Intelligence Centralized Distributed Complexity Lower Higher Scalability Limited High Flexibility Moderate High Resilience Low High Multi-Agent Systems + Large Language Models A major breakthrough is combining MAS with advanced AI models. Example: Each agent: Has a specialized role Uses language models to communicate Use Cases: AI research assistants Automated business workflows Coding agents collaborating Conclusion Agentic AI represents a fundamental evolution in artificial intelligence — shifting from tools that respond to prompts toward systems that pursue goals. The transformation happens through architecture, not magic. By applying five key design patterns: Planner–Executor Tool Use Memory Augmentation Reflection Multi-Agent Collaboration developers can turn LLMs into reliable, capable AI agents. The future of AI isn’t just smarter models — it’s smarter systems. FAQ What is Agentic AI in simple terms? Agentic AI refers to AI systems that can independently plan and execute tasks to achieve goals rather than only responding to prompts. How is Agentic AI different from chatbots? Chatbots generate responses. Agentic AI systems take actions, use tools, remember context, and iteratively work toward outcomes. Do AI agents replace humans? No. Most agentic systems are designed to augment human workflows by automating repetitive or complex tasks

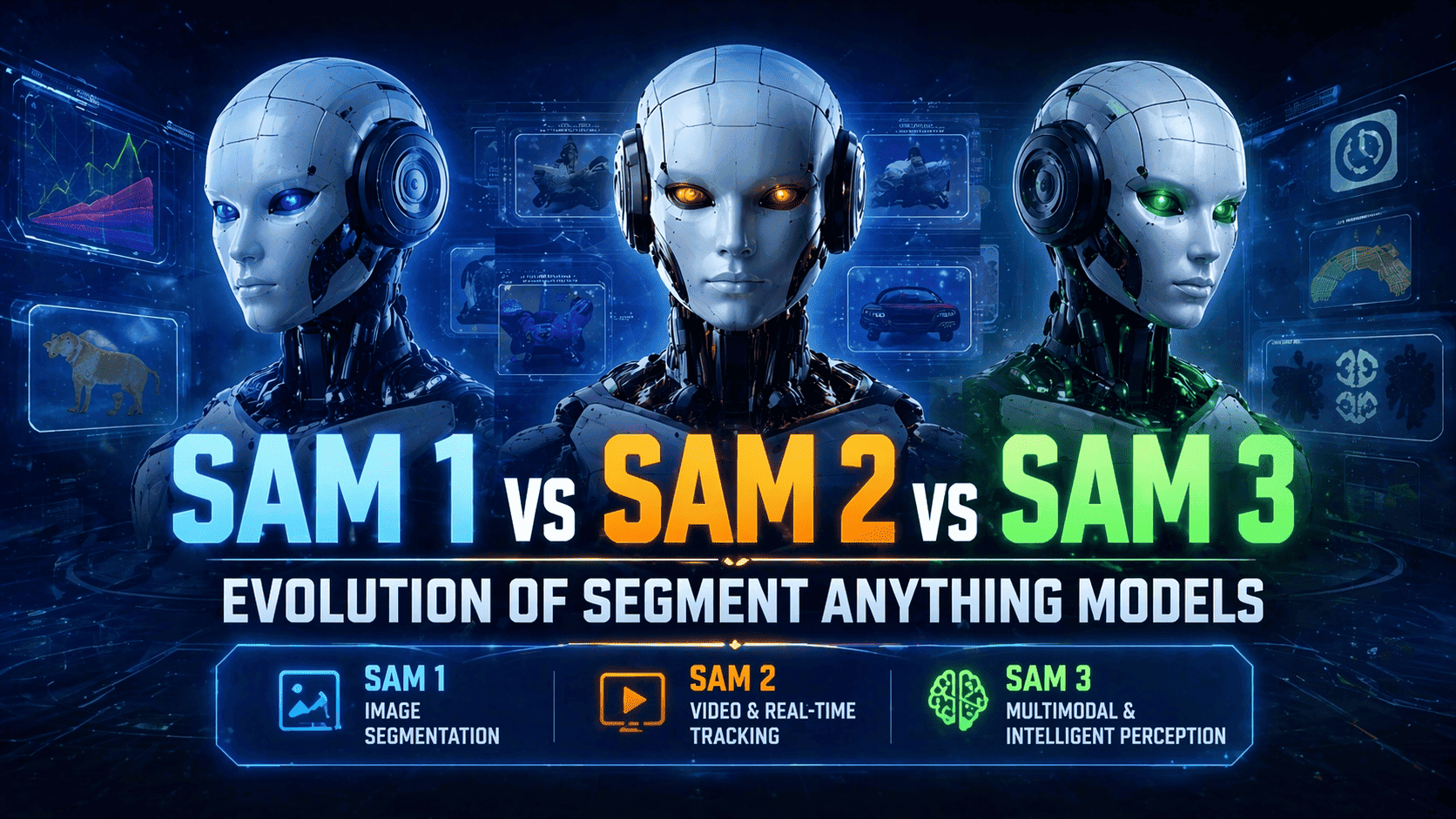

Introduction When Meta introduced the Segment Anything Model (SAM), it didn’t just release another AI model—it redefined how we think about image segmentation. Before SAM, segmentation models were: Task-specific Data-hungry Hard to generalize SAM flipped that paradigm by introducing a foundation model for vision—a system capable of segmenting virtually anything with minimal input. Since then, the evolution from SAM 1 → SAM 2 → SAM 3 has followed a clear trajectory: Static → Dynamic Manual → Assisted Reactive → Context-aware This blog dives deep into each version, not just at a surface level—but across architecture, capabilities, limitations, and real-world impact. What Is the Segment Anything Model (SAM)? At its core, SAM is a promptable segmentation system. Instead of asking: “Can this model segment cats?” You ask: “Given this prompt, what object do you want?” Supported Prompts Points (foreground/background) Bounding boxes Masks (Emerging) natural language This flexibility is what makes SAM so powerful—it turns segmentation into an interactive and general-purpose tool. SAM 1: The Breakthrough (2023) SAM 1 laid the foundation for everything that followed. Core Idea A universal segmentation model trained on an unprecedented dataset (SA-1B). Architecture Overview SAM 1 consists of three main components: Image encoder (Vision Transformer-based) Prompt encoder Mask decoder This modular design allows the model to: Understand the image globally Adapt to user input dynamically Generate precise segmentation masks Key Features 1. Massive Training Dataset Over 1 billion masks Diverse domains: Natural images Indoor scenes Complex object boundaries 2. Zero-Shot Generalization SAM 1 works across: Medical scans Satellite imagery Industrial datasets …without retraining. 3. Prompt Flexibility Users can guide segmentation with minimal effort: Click a point → get object Draw a box → isolate region Strengths Extremely versatile High-quality segmentation Works out-of-the-box Ideal for annotation pipelines Weaknesses No temporal awareness Requires manual interaction Not optimized for real-time systems Limited contextual reasoning Real-World Applications Data labeling platforms Medical imaging annotation Creative tools (e.g., background removal) Preprocessing for machine learning pipelines 👉 Key Insight:SAM 1 is a tool for humans, not an autonomous system. SAM 2: From Images to Streaming Intelligence (2024) SAM 2 represents a massive leap forward. Instead of treating images independently, SAM 2 introduces:👉 continuous visual understanding Core Innovation: Temporal Memory SAM 2 doesn’t just see—it remembers. What This Enables: Object tracking across frames Consistent segmentation in video Reduced need for repeated prompts Architectural Evolution SAM 2 extends SAM 1 by adding: Streaming memory modules Frame-to-frame feature propagation Real-time inference optimizations This transforms the model into something closer to a perception engine rather than a static tool. Key Features 1. Video Segmentation Works across entire sequences Maintains object identity 2. Real-Time Interaction Near live processing Suitable for camera feeds 3. Persistent Object Tracking Once selected, objects stay tracked Handles occlusion better Strengths Excellent for video workflows Reduces manual input More scalable for real-world systems Enables interactive AI applications Weaknesses Computationally heavier Still relies on prompts Tracking drift in long videos Limited semantic understanding Real-World Applications Video editing tools Autonomous driving perception Surveillance and monitoring Sports analytics 👉 Key Insight:SAM 2 shifts from interaction → continuity. SAM 3: Toward General Visual Intelligence (2025–2026) Unlike SAM 1 and SAM 2, SAM 3 is less of a single release and more of an evolutionary direction. It represents the convergence of: Computer vision Language models Reasoning systems Core Idea 👉 Segmentation becomes context-aware and autonomous Key Innovations (Emerging) 1. Multimodal Prompts Instead of clicks, you can say: “Segment all broken objects” “Highlight the main subject” This blends segmentation with natural language understanding. 2. Semantic Awareness SAM 3 doesn’t just segment shapes—it understands: Object roles Scene context Relationships 3. Reduced Human Input Automatic object discovery Prioritization of important regions Smart defaults 4. Integration with AI Agents SAM 3 can act as the “eyes” of: Robotics systems Autonomous agents AR/VR environments 5. 3D & Spatial Understanding Future SAM systems are expected to: Segment across multiple views Build spatial maps Work in immersive environments Strengths (Projected) Context-driven segmentation Cross-modal reasoning Scalable to complex environments Minimal supervision required Limitations (Current State) Still evolving rapidly Not standardized Trade-offs in performance vs intelligence Requires integration with larger AI systems Real-World Applications Robotics and automation AI copilots with vision Smart surveillance Mixed reality systems 👉 Key Insight:SAM 3 moves from seeing → understanding. Deep Technical Comparison 1. Interaction Model Version Interaction Style SAM 1 Manual prompts SAM 2 Prompt + tracking SAM 3 Natural language + autonomous 2. Temporal Capabilities Version Temporal Awareness SAM 1 None SAM 2 Frame memory SAM 3 Contextual memory 3. Intelligence Layer Version Intelligence Level SAM 1 Reactive SAM 2 Persistent SAM 3 Context-aware 4. Deployment Readiness Version Deployment SAM 1 Mature SAM 2 Production-ready (select use cases) SAM 3 Experimental / emerging SAM vs Traditional Segmentation Models Before SAM, models like: Mask R-CNN U-Net required: Task-specific training Labeled datasets Fine-tuning SAM eliminates much of that by: Generalizing across domains Reducing labeling effort Enabling interactive workflows 👉 This is why SAM is often considered a foundation model for vision, similar to how large language models transformed NLP. Practical Guidance: Which One Should You Use? Use SAM 1 if: You need high-quality image segmentation You’re building annotation tools You want stability and simplicity Use SAM 2 if: You work with video or live feeds You need object tracking You want interactive real-time systems Watch SAM 3 if: You’re building next-gen AI products You need multimodal intelligence You’re working in robotics, AR, or agents The Bigger Picture: Where This Is All Going The evolution of SAM reflects a broader shift in AI: Phase 1: Tools Assist humans Require input Limited context Phase 2: Systems Handle continuous data Reduce manual effort Improve efficiency Phase 3: Intelligence Understand context Act autonomously Integrate across modalities Final Thoughts The journey from SAM 1 to SAM 3 is not just an upgrade cycle—it’s a transformation in how machines perceive the world. SAM 1: A powerful segmentation tool SAM 2: A real-time perception system SAM 3: A step toward visual intelligence As AI continues to evolve, segmentation will

Introduction Object detection has undergone a remarkable transformation over the past decade. What began with handcrafted features and classical computer vision techniques has evolved into sophisticated deep learning systems capable of understanding complex visual environments. Models like YOLO, Faster R-CNN, and SSD pushed the boundaries of speed and accuracy, enabling real-world applications such as autonomous driving, smart surveillance, and industrial automation. However, as applications became more complex, the limitations of traditional convolutional neural networks (CNNs) became more apparent—particularly their difficulty in capturing long-range dependencies and global context within images. This challenge led to the rise of transformer-based architectures, which revolutionized natural language processing and soon made their way into computer vision. While transformers introduced a powerful way to model global relationships in images, early implementations like DETR struggled with slow inference speeds, making them impractical for real-time applications. This created a clear gap in the field: models were either fast or highly accurate—but rarely both. RT-DETR (Real-Time Detection Transformer) emerges as a solution to this problem. It represents a new generation of object detection models that successfully combines the global reasoning capabilities of transformers with the efficiency required for real-time performance. By rethinking the architecture and optimizing key components, RT-DETR makes transformer-based detection viable for real-world, time-sensitive applications. In this blog, we explore how RT-DETR works, what makes it unique, and why it is quickly becoming a cornerstone in modern computer vision systems What is RT-DETR? RT-DETR is a vision transformer-based object detection model designed for real-time applications. It builds on the DETR (Detection Transformer) framework but introduces optimizations that significantly improve inference speed. Unlike traditional detectors: It is end-to-end (no pipeline fragmentation) It eliminates Non-Maximum Suppression (NMS) It directly predicts final object detections RT-DETR was introduced in the paper: “DETRs Beat YOLOs on Real-time Object Detection” (2023) Why RT-DETR Matters RT-DETR bridges a long-standing gap in computer vision: Transformers → excellent global reasoning, but slow CNN detectors (like YOLO) → fast, but less contextual RT-DETR merges both worlds through a hybrid architecture, enabling: Real-time inference Strong accuracy Simplified deployment Key Features of RT-DETR 1. Real-Time Performance RT-DETR achieves real-time speeds while maintaining high detection accuracy. 2. End-to-End Detection (No NMS) No anchor boxes and no NMS means a simpler and faster pipeline. 3. Hybrid Encoder Design Combines CNN backbones with transformer attention mechanisms. 4. Efficient Attention (AIFI) Optimized attention reduces computational cost. 5. Query Selection Optimization Processes only the most relevant object queries. 6. Flexible Model Variants Includes scalable versions like RT-DETR-L and RT-DETR-X. How RT-DETR Works Feature extraction via CNN Hybrid encoding (CNN + Transformer) Object queries interact with features Predictions (class + bounding boxes) Direct output without NMS RT-DETR vs Other Object Detectors Model Speed Accuracy Pipeline Complexity YOLO Very Fast High Moderate Faster R-CNN Slow Very High High DETR Slow Very High High RT-DETR Fast Very High Low Advantages of RT-DETR Real-time transformer-based detection End-to-end architecture No NMS or anchor boxes Strong global context understanding Scalable and flexible Limitations Requires GPU for best performance Transformer components can be memory-intensive Still evolving compared to mature CNN models Use Cases Autonomous vehicles Surveillance systems Retail analytics Robotics Smart cities Citations and Acknowledgments Official Citation (BibTeX) @misc{lv2023detrs, title={DETRs Beat YOLOs on Real-time Object Detection}, author={Wenyu Lv and Shangliang Xu and Yian Zhao and Guanzhong Wang and Jinman Wei and Cheng Cui and Yuning Du and Qingqing Dang and Yi Liu}, year={2023}, eprint={2304.08069}, archivePrefix={arXiv}, primaryClass={cs.CV} } Acknowledgments RT-DETR was developed by Baidu and supported by the PaddlePaddle team, helping advance real-time transformer-based detection and making it accessible through frameworks like Ultralytics. Future of RT-DETR Edge-optimized lightweight models Better small-object detection Improved training efficiency Integration with multimodal AI systems Conclusion RT-DETR marks a significant milestone in the evolution of object detection. It demonstrates that the long-standing trade-off between speed and accuracy is no longer inevitable. By intelligently combining CNN-based feature extraction with transformer-based global reasoning, RT-DETR delivers a powerful, efficient, and streamlined detection framework. What truly sets RT-DETR apart is its end-to-end design philosophy. By eliminating the need for anchor boxes and post-processing steps like Non-Maximum Suppression, it simplifies the detection pipeline while maintaining high performance. This not only reduces computational overhead but also makes the model easier to deploy and scale across different environments. As industries increasingly rely on real-time visual intelligence—from autonomous vehicles navigating busy streets to smart cities analyzing live video feeds—the demand for models like RT-DETR will continue to grow. Its ability to process complex scenes quickly and accurately makes it a strong candidate for next-generation AI systems. Looking ahead, we can expect further advancements in transformer efficiency, edge deployment capabilities, and integration with multimodal AI systems. RT-DETR is not just an incremental improvement—it represents a shift toward more intelligent, efficient, and practical object detection models. For developers, researchers, and businesses alike, adopting RT-DETR means staying ahead in a rapidly evolving AI landscape. It’s more than just a model—it’s a glimpse into the future of computer vision, where speed, simplicity, and intelligence converge seamlessly. FAQ (Frequently Asked Questions) 1. What does RT-DETR stand for? RT-DETR stands for Real-Time Detection Transformer, a fast and accurate object detection model based on transformer architecture. 2. How is RT-DETR different from YOLO? RT-DETR uses transformers for global context and does not require NMS, while YOLO is CNN-based and relies on post-processing. RT-DETR aims to match YOLO’s speed with better contextual understanding. 3. Does RT-DETR require NMS? No. RT-DETR is an end-to-end model that eliminates the need for Non-Maximum Suppression. 4. Is RT-DETR suitable for real-time applications? Yes. RT-DETR is specifically designed for real-time inference, making it ideal for video analytics, robotics, and autonomous systems. 5. Who developed RT-DETR? RT-DETR was developed by Baidu with contributions from the PaddlePaddle research team. 6. What are RT-DETR model variants? Common variants include: RT-DETR-L (Large) RT-DETR-X (Extra Large) These provide different trade-offs between speed and accuracy. 7. Is RT-DETR better than DETR? Yes, in terms of speed. RT-DETR significantly improves inference time while maintaining similar accuracy. Visit Our Data Annotation Service Visit Now

Introduction Object detection has become one of the most important tasks in modern computer vision. From autonomous driving and medical imaging to surveillance systems and drone analytics, machines are increasingly expected to recognize objects in complex visual environments. However, while detecting large and clear objects has reached impressive accuracy levels, small object detection remains one of the most difficult problems in artificial intelligence. Small objects — such as distant pedestrians, tiny defects in manufacturing, or small tumors in medical scans — often occupy only a few pixels in an image. Despite their size, these objects frequently carry critical information. Missing them can lead to serious consequences, making small object detection an active and important research area. This article explores what small object detection is, why it is challenging, the techniques used to improve performance, real-world applications, and emerging trends shaping the future. What Is Small Object Detection? Small object detection refers to identifying and localizing objects that occupy a very small portion of an image. In many benchmarks, objects are categorized based on their pixel area: Small objects: typically < 32×32 pixels Medium objects: 32×32 to 96×96 pixels Large objects: > 96×96 pixels Unlike large objects, small objects contain limited visual information, making it harder for deep learning models to extract meaningful features. Examples include: Pedestrians far from a self-driving car Tiny vehicles in aerial imagery Micro-defects in industrial inspection Small animals in wildlife monitoring Lesions in medical scans Why Small Object Detection Is Difficult 1. Limited Visual Information Small objects contain fewer pixels, which means: Less texture Reduced shape details Higher sensitivity to noise Important visual cues may disappear during image processing. 2. Feature Loss During Downsampling Modern convolutional neural networks (CNNs) repeatedly reduce spatial resolution using pooling or strided convolutions. While this helps capture semantic information, it can completely eliminate small objects from deeper layers. 3. Class Imbalance Datasets often contain far more background pixels than small object pixels. Models may learn to prioritize larger or more dominant objects. 4. Occlusion and Clutter Small objects frequently appear: Partially hidden In dense scenes Against complex backgrounds This increases false positives and missed detections. 5. Scale Variation Objects may appear at vastly different sizes within the same image, making scale generalization difficult. Key Techniques for Small Object Detection Researchers and engineers have developed multiple strategies to address these challenges. 1. Feature Pyramid Networks (FPN) Feature Pyramid Networks combine features from multiple layers of a CNN: Shallow layers → high spatial resolution Deep layers → strong semantic information By merging both, models retain details necessary for detecting small objects. Benefits: Multi-scale feature representation Improved detection accuracy Widely adopted in modern detectors 2. Multi-Scale Training and Testing Images are resized to different scales during training. This allows models to learn objects appearing at various resolutions. Techniques include: Image pyramids Random resizing Scale jittering 3. Super-Resolution Techniques Super-resolution models enhance image quality before detection by increasing pixel density. Advantages: Recover fine details Improve feature extraction Boost performance in low-resolution scenarios 4. Attention Mechanisms Attention modules help networks focus on relevant regions. Examples: Spatial attention Channel attention Transformer-based attention These mechanisms guide the model toward subtle visual cues. 5. Contextual Information Modeling Small objects benefit heavily from surrounding context. For example: A tiny pedestrian is likely on a road. A small boat appears on water. Context-aware models analyze neighboring regions to improve predictions. 6. Anchor Optimization Traditional detectors use predefined anchor boxes. For small objects: Smaller anchors are introduced Anchor density is increased Adaptive anchor learning is applied This improves localization precision. 7. Transformer-Based Detection Vision transformers capture long-range dependencies across images. Advantages for small objects: Global context awareness Better feature relationships Reduced reliance on handcrafted anchors Examples include DETR-style architectures and hybrid CNN-transformer models. Popular Models Used for Small Object Detection Several architectures are commonly adapted or optimized for detecting small objects: YOLO variants (YOLOv5, YOLOv8 with small-scale tuning) Faster R-CNN + FPN RetinaNet EfficientDet DETR and Deformable DETR Each balances speed, accuracy, and computational cost differently. Real-World Applications Autonomous Driving Detecting distant pedestrians, traffic signs, and cyclists early improves safety and reaction time. Medical Imaging Small anomaly detection enables early disease diagnosis, including: Tumor detection Microcalcifications in mammograms Cellular analysis Aerial and Satellite Imaging Used for: Vehicle monitoring Disaster response Military surveillance Environmental tracking Industrial Inspection Factories rely on detecting tiny defects such as: Surface cracks Micro scratches Assembly errors Security and Surveillance Identifying suspicious objects or individuals at long distances enhances monitoring systems. Evaluation Metrics Small object detection is typically evaluated using: mAP (mean Average Precision) across object sizes AP_Small (COCO benchmark metric) Precision–Recall curves IoU (Intersection over Union) AP_Small specifically measures performance on small instances. Current Challenges Despite progress, several issues remain: High computational cost for multi-scale processing Sensitivity to image resolution Dataset limitations Real-time deployment constraints Generalization across environments Future Trends 1. Foundation Vision Models Large-scale pretrained vision models are improving generalization across object sizes. 2. Edge AI Optimization Efficient small-object detectors designed for drones, mobile devices, and IoT systems. 3. Better Data Augmentation Synthetic data and generative AI help create diverse small-object samples. 4. Hybrid CNN–Transformer Architectures Combining local feature extraction with global reasoning is becoming the dominant approach. 5. Self-Supervised Learning Reducing dependence on labeled datasets while improving robustness. Best Practices for Practitioners If you are building a small object detection system: ✅ Use higher input resolution✅ Apply feature pyramids✅ Tune anchor sizes carefully✅ Include contextual modeling✅ Use data augmentation heavily✅ Evaluate using AP_Small metrics✅ Balance speed vs accuracy requirements Best Practices for Practitioners If you are building a small object detection system: ✅ Use higher input resolution✅ Apply feature pyramids✅ Tune anchor sizes carefully✅ Include contextual modeling✅ Use data augmentation heavily✅ Evaluate using AP_Small metrics✅ Balance speed vs accuracy requirements Conclusion Small object detection represents one of the most challenging yet impactful areas of computer vision. While deep learning has significantly improved object detection overall, identifying tiny objects continues to demand specialized architectures, smarter training strategies, and better data handling. As transformer models, foundation vision