## Introduction

Artificial intelligence has evolved rapidly over the past few years, transforming industries, workflows, and digital experiences. Among the most talked-about technologies today are AI agents and generative AI. While many people use these terms interchangeably, they represent two distinct categories of artificial intelligence with different purposes, capabilities, and business impacts.

Generative AI became globally recognized through tools like OpenAI’s ChatGPT, image generators, and AI-powered content creation platforms. Meanwhile, AI agents are emerging as autonomous systems capable of reasoning, planning, making decisions, and executing tasks with minimal human intervention.

Understanding the difference between AI agents and generative AI is essential for businesses, developers, and organizations looking to implement modern AI solutions effectively. In this comprehensive guide, we will explore:

- What generative AI is
- What AI agents are
- Core differences between the two
- Real-world applications
- Advantages and limitations
- How they work together
- Future trends shaping AI automation

## What Is Generative AI?

Generative AI refers to artificial intelligence systems designed to create new content based on patterns learned from massive datasets. These systems generate outputs such as:

- Text
- Images
- Audio
- Videos
- Code
- Designs

Popular examples include:

- OpenAI ChatGPT
- Google Gemini
- Anthropic Claude
- Midjourney
- Adobe Firefly

Generative AI models rely heavily on deep learning architectures such as:

- Large Language Models (LLMs)
- Diffusion Models
- Transformer Networks
- Generative Adversarial Networks (GANs)

These systems produce new outputs by modeling the statistical patterns in their training data. Language models, for instance, predict the next token (a word or word fragment). Diffusion models generate images by progressively removing noise.

### How Generative AI Works

Generative AI models are trained on enormous datasets containing billions of examples.
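The toy model below makes next-word prediction concrete. It is a minimal bigram model written in Python, not a picture of how production LLMs work. Real models use large neural networks over subword tokens, and they often sample from the predicted distribution rather than always taking the top word. The corpus and function names are hypothetical and exist only for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def next_word_distribution(model: dict[str, Counter], word: str) -> dict[str, float]:
    """Convert raw follow-counts into probabilities for the next word."""
    counts = model.get(word.lower(), Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}


def generate(model: dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Greedy decoding: keep appending the most probable next word."""
    words = [start.lower()]
    for _ in range(max_words):
        dist = next_word_distribution(model, words[-1])
        if not dist:  # no known continuation, so stop
            break
        words.append(max(dist, key=dist.get))
    return " ".join(words)


corpus = [
    "the product saves time",
    "the product saves money",
    "the team saves time",
]
model = train_bigram(corpus)
print(next_word_distribution(model, "the"))
print(generate(model, "the"))  # the product saves time
```

"product" follows "the" in two of three sentences, so the model assigns it probability 2/3. Greedy decoding then picks it, which is the same "most likely continuation" logic described in this section, only at a vastly smaller scale.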
During training, the AI learns:

- Language structures
- Semantic relationships
- Visual patterns
- Coding syntax
- User behavior patterns

For example, a text-based generative AI model repeatedly predicts a probability distribution over the next word (more precisely, the next token) in a sequence. It then picks or samples a word from that distribution. If a user asks:

"Write a marketing email for a SaaS product"

the AI generates content based on statistical patterns learned during training.

### Main Features of Generative AI

**1. Content Creation.** Generative AI excels at producing:

- Blog articles
- Social media posts
- Product descriptions
- Images
- Marketing campaigns
- Source code

**2. Human-Like Responses.** Modern LLMs simulate conversational interactions with impressive fluency.

**3. Creativity Enhancement.** Generative AI supports brainstorming, ideation, and design generation.

**4. Fast Output Generation.** Tasks that once took hours can now often be completed in seconds.

**5. Multimodal Capabilities.** Many advanced models can process text, images, audio, and video together.

## What Are AI Agents?

AI agents are autonomous systems that can:

- Observe environments
- Analyze situations
- Make decisions
- Plan actions
- Execute tasks
- Learn from feedback

Unlike generative AI, which primarily creates content, AI agents are designed to act independently toward achieving goals. AI agents can integrate:

- LLMs
- APIs
- Databases
- Software tools
- Automation workflows
- Memory systems

Their primary objective is task execution rather than content generation alone.

### How AI Agents Work

AI agents typically operate in a loop:

1. Observe
2. Reason
3. Plan
4. Act
5. Evaluate
6. Repeat

For example, an AI customer support agent may:

- Read incoming tickets
- Categorize requests
- Search company databases
- Draft responses
- Escalate complex issues
- Update CRM systems

All of this can happen with minimal human intervention.

### Core Components of AI Agents

**1. Reasoning Engine.** Determines what actions to take.

**2. Memory.** Stores previous interactions and context.

**3. Planning System.** Breaks goals into smaller executable steps.

**4. Tool Integration.** Uses external software, APIs, and applications.

**5. Autonomous Decision-Making.** Acts independently based on objectives.

## AI Agents vs Generative AI: Key Differences

Comparison of major capabilities between AI agents and generative AI systems:

| Feature | Generative AI | AI Agents |
|---|---|---|
| Primary Purpose | Content generation | Autonomous task execution |
| Human Dependency | High | Lower |
| Memory | Limited | Persistent memory possible |
| Decision-Making | Minimal | Advanced |
| Tool Usage | Usually standalone | Integrates tools & APIs |
| Workflow Automation | Limited | Extensive |
| Autonomy | Reactive | Proactive |
| Goal-Oriented | Sometimes | Strongly goal-driven |

## Real-World Examples of Generative AI

### Content Marketing

Businesses use generative AI for:

- SEO blogs
- Email campaigns
- Ad copy
- Product descriptions

### Software Development

AI coding assistants generate:

- Code snippets
- Documentation
- Bug fixes
- Test cases

Examples include GitHub Copilot and OpenAI Codex.

### Design and Media

AI-generated visuals, videos, and audio are transforming creative industries.

### Customer Support

Chatbots powered by generative AI answer customer questions in natural language.

## Real-World Examples of AI Agents

### Autonomous Customer Support Agents

AI agents can:

- Resolve tickets
- Access databases
- Trigger workflows
- Schedule follow-ups

### AI Research Agents

Agents gather information from multiple sources and summarize findings automatically.

### Sales Automation Agents

AI agents can:

- Qualify leads
- Send outreach emails
- Update CRMs
- Schedule meetings

### Software Engineering Agents

Advanced coding agents can:

- Write code
- Run tests
- Debug applications
- Deploy software

## Benefits of Generative AI

**Increased Productivity.** Teams generate content significantly faster.

**Lower Operational Costs.** Automation reduces manual creative workloads.

**Enhanced Creativity.** AI assists with ideation and innovation.

**Scalability.** Businesses can produce content at scale.

## Limitations of Generative AI

**Hallucinations.** Generative AI may create inaccurate or fabricated information.
**Lack of True Understanding.** Models predict patterns rather than truly understanding concepts.

**Limited Autonomy.** Most generative AI systems require prompts and human supervision.

**Context Limitations.** Long-term memory is often weak or unavailable.

## Benefits of AI Agents

**End-to-End Automation.** AI agents execute complete workflows autonomously.

**Continuous Learning.** Agents can improve through feedback and interaction.

**Operational Efficiency.** Businesses reduce repetitive manual tasks.

**Intelligent Decision-Making.** Agents analyze data and optimize outcomes.

## Limitations of AI Agents

**Complexity.** Building robust AI agents is technically challenging.

**Security Risks.** Autonomous systems require strong governance and safeguards.

**Infrastructure Requirements.** AI agents often require:

- APIs
- Databases
- Orchestration systems
- Monitoring frameworks

**Reliability Concerns.** Poorly designed agents may make incorrect decisions.

## How AI Agents and Generative AI Work Together

In reality, many advanced AI systems combine both technologies. Generative AI often acts as the "brain" of an AI agent by providing:

- Natural language understanding
- Content generation
- Reasoning support

Meanwhile, AI agents provide:

- Autonomy
- Planning
- Action execution
- Workflow management

For example, an AI agent:

1. Receives a customer support request
2. Uses generative AI to draft a response
3. Accesses databases
4. Updates support tickets
5. Sends emails automatically

This combination is driving the next wave of intelligent automation.

## Industries Adopting AI Agents and Generative AI

### Healthcare

Hospitals use AI for:

- Medical documentation
- Diagnostic assistance
- Patient support automation

### Finance

Banks deploy AI for:

- Fraud detection
- Financial analysis
- Customer service automation

### E-Commerce

Retailers use AI for:

## Introduction

Data collection has become one of the most critical components of artificial intelligence, business intelligence, automation, and digital transformation. Organizations today rely heavily on accurate, scalable, and real-time data to train machine learning models, optimize operations, understand customer behavior, and make informed decisions. However, traditional data collection methods often involve significant manual effort, high operational costs, inconsistent quality, and long turnaround times.

This is where Agent AI is changing the landscape. Agent AI, also known as Agentic AI, refers to intelligent systems capable of acting autonomously to complete tasks, make decisions, communicate with systems, and continuously improve workflows. Unlike traditional automation tools that follow static instructions, AI agents can analyze environments, understand goals, adapt to changing conditions, and collaborate with other agents or humans.

When applied to data collection, Agent AI creates powerful opportunities for businesses across industries. AI agents can gather structured and unstructured data from multiple sources, validate information, organize datasets, monitor quality, automate labeling tasks, interact with APIs, scrape public information responsibly, conduct surveys, process multimedia content, and even coordinate crowdsourcing operations.

From healthcare and retail to automotive, finance, agriculture, education, and smart cities, companies are adopting AI agents to improve efficiency, accelerate data pipelines, and reduce operational bottlenecks.
In this comprehensive guide, we will explore how to use Agent AI for data collection, including:

- What Agent AI is
- Why Agent AI matters in modern data collection
- Core components of AI-driven data collection systems
- Step-by-step implementation process
- Best tools and technologies
- Industry use cases
- Challenges and ethical considerations
- Best practices for scalable deployment
- Future trends in agentic AI systems

Whether you are a startup, enterprise, AI developer, researcher, or AI data solutions provider, this guide will help you understand how Agent AI can transform the way you collect and manage data.

## What is Agent AI?

Agent AI refers to autonomous software systems designed to achieve goals with minimal human intervention. These systems can reason, plan, communicate, learn, and execute tasks dynamically.

Unlike traditional rule-based automation, Agent AI systems are adaptive. They can:

- Analyze objectives
- Break tasks into smaller subtasks
- Interact with external systems
- Make decisions based on context
- Learn from outcomes
- Optimize workflows continuously

An AI agent can operate independently or as part of a multi-agent ecosystem where several intelligent agents collaborate to achieve larger objectives.

### Core Characteristics of Agent AI

**1. Autonomy.** AI agents can execute tasks without constant human supervision.

**2. Goal-Oriented Behavior.** Agents work toward achieving defined objectives.

**3. Context Awareness.** AI agents understand contextual information and adapt their actions accordingly.

**4. Decision-Making Capability.** They evaluate options and select the best course of action.

**5. Learning Ability.** Many AI agents improve over time using machine learning and reinforcement learning.

**6. Communication.** AI agents can communicate with APIs, databases, cloud systems, and even humans.

## Understanding Data Collection in the AI Era

Data collection involves gathering information from various sources for analysis, machine learning, reporting, or operational purposes.
Modern organizations collect multiple types of data, including:

- Text data
- Audio recordings
- Video footage
- Images
- Sensor data
- LiDAR data
- Geospatial data
- Medical data
- Customer interactions
- Social media content
- Transactional data
- IoT device information

The explosion of digital information has made manual collection methods increasingly inefficient.

### Challenges of Traditional Data Collection

Traditional methods often face several limitations:

**Time Consumption.** Manual collection and annotation require extensive human labor.

**Scalability Issues.** Large-scale projects become difficult to manage.

**Data Quality Problems.** Human errors can reduce consistency.

**High Costs.** Enterprises spend significant budgets on workforce management.

**Delayed Insights.** Slow collection delays business decisions.

**Limited Real-Time Capability.** Manual systems cannot efficiently handle real-time streams.

Agent AI addresses these limitations by introducing intelligent automation into every stage of the data lifecycle.

## Why Use Agent AI for Data Collection?

Agent AI provides transformative benefits for modern enterprises.

**1. Automation at Scale.** AI agents can process large volumes of data in parallel across multiple platforms, for example:

- Scraping websites
- Monitoring sensors
- Collecting IoT streams
- Organizing cloud storage
- Extracting structured information from documents

**2. Faster Data Pipelines.** Agent AI can substantially reduce data collection time. Depending on the project, tasks that previously took weeks may be completed in hours.

**3. Improved Data Accuracy.** AI agents use validation rules, anomaly detection, and quality checks to improve consistency.

**4. Real-Time Data Collection.** AI agents can continuously monitor live systems and collect incoming information as it arrives. This is especially valuable for:

- Financial trading
- Smart cities
- Autonomous vehicles
- Healthcare monitoring
- Cybersecurity systems

**5. Reduced Operational Costs.** Organizations can reduce manual labor costs while improving efficiency.

**6. Intelligent Decision-Making.** AI agents can decide which data sources are relevant and prioritize high-value information.

**7. Multi-Source Integration.** Agents can combine data from:

- APIs
- Databases
- Sensors
- Web applications
- Cloud systems
- Mobile apps
- Enterprise platforms

## How Agent AI Works in Data Collection

Agent AI systems follow an intelligent workflow.

**Step 1: Define Objectives.** The organization defines goals such as:

- Collect customer reviews
- Monitor traffic data
- Gather medical images
- Build training datasets
- Analyze user behavior

**Step 2: Task Planning.** The AI agent breaks the objective into smaller tasks, for example:

- Identify sources
- Access databases
- Extract data
- Clean records
- Validate quality
- Store results

**Step 3: Source Identification.** The agent identifies appropriate data sources. These may include:

- Public websites
- APIs
- Enterprise databases
- IoT devices
- Cloud systems
- Video feeds
- Annotation platforms

**Step 4: Data Extraction.** The agent gathers information automatically. Methods include:

- API integration
- Web scraping
- Sensor communication
- OCR extraction
- Speech recognition
- Video processing

**Step 5: Data Cleaning.** The AI agent removes:

- Duplicates
- Corrupted records
- Records with missing values
- Invalid formats

**Step 6: Data Validation.** Agents verify quality using:

- Statistical analysis
- Pattern recognition
- Rule-based checks
- Human review workflows

**Step 7: Storage and Organization.** Collected data is organized into:

- Databases
- Cloud storage
- Data lakes
- AI training repositories

**Step 8: Continuous Learning.** AI agents analyze performance and improve future collection strategies.

## Types of Agent AI Used for Data Collection

**1. Web Scraping Agents.** These agents gather information from websites. Use cases include:

- Market research
- Price monitoring
- Competitor analysis
- News aggregation

**2. Conversational AI Agents.** Chatbots and voice assistants collect customer information. Examples:

- Customer support interactions
- Survey automation
- User feedback
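The cleaning, validation, and storage stages (Steps 5 to 7 of the workflow above) are the most mechanical parts of an agent's data pipeline, so they are easy to sketch. The Python below is a minimal, hypothetical example built around the "collect customer reviews" objective. Several details are assumptions made for illustration:

- The field names.
- The 1 to 5 rating range.
- The three-word minimum for review text.
- The in-memory dictionary, which stands in for a real database or data lake.

Records that fail the rule-based checks go to a human review queue instead of being silently discarded. This mirrors the human review workflows listed under Step 6.

```python
REQUIRED_FIELDS = ("review_id", "rating", "text")


def clean(records: list[dict]) -> list[dict]:
    """Step 5: drop records with missing values, invalid formats, or duplicate IDs."""
    seen_ids: set[str] = set()
    cleaned = []
    for rec in records:
        if any(rec.get(field) in (None, "") for field in REQUIRED_FIELDS):
            continue  # missing value
        if type(rec["rating"]) is not int:
            continue  # invalid format, e.g. "4" stored as text (bools are rejected too)
        if rec["review_id"] in seen_ids:
            continue  # duplicate
        seen_ids.add(rec["review_id"])
        cleaned.append(rec)
    return cleaned


def validate(records: list[dict], min_rating: int = 1, max_rating: int = 5,
             min_words: int = 3) -> tuple[list[dict], list[dict]]:
    """Step 6: rule-based checks; records that fail go to a human review queue."""
    accepted, needs_review = [], []
    for rec in records:
        in_range = min_rating <= rec["rating"] <= max_rating
        long_enough = len(rec["text"].split()) >= min_words
        (accepted if in_range and long_enough else needs_review).append(rec)
    return accepted, needs_review


def run_pipeline(raw_records: list[dict]) -> dict[str, list[dict]]:
    """Steps 5-7: clean, validate, then organize into a stand-in store."""
    accepted, needs_review = validate(clean(raw_records))
    return {"dataset": accepted, "review_queue": needs_review}


raw = [
    {"review_id": "r1", "rating": 5, "text": "Fast shipping and great quality"},
    {"review_id": "r1", "rating": 5, "text": "Fast shipping and great quality"},
    {"review_id": "r2", "rating": None, "text": "No rating given here"},
    {"review_id": "r3", "rating": "4", "text": "Rating stored as text"},
    {"review_id": "r4", "rating": 9, "text": "Out of range rating"},
    {"review_id": "r5", "rating": 2, "text": "Bad"},
]
result = run_pipeline(raw)
print([r["review_id"] for r in result["dataset"]])       # ['r1']
print([r["review_id"] for r in result["review_queue"]])  # ['r4', 'r5']
```

Cleaning drops the duplicate, the missing rating, and the text-typed rating. Validation then routes the out-of-range and too-short reviews to humans. In an agentic setup, the thresholds themselves are what Step 8 (continuous learning) would tune over time.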

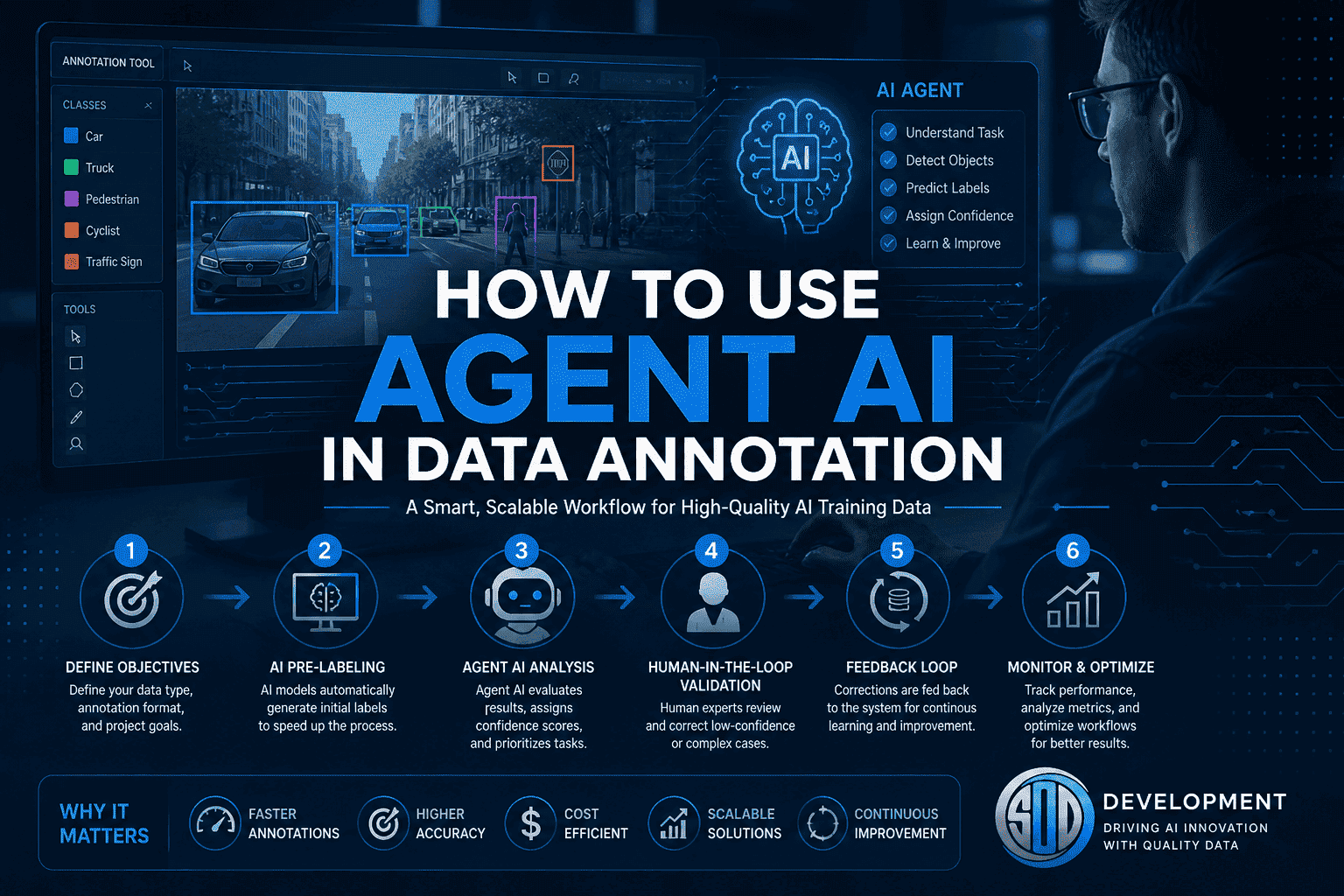

## Introduction

Data annotation has long been the backbone of artificial intelligence. Whether you’re building computer vision systems, training large language models, or developing autonomous vehicles, high-quality labeled data is non-negotiable. But traditional annotation methods (manual labeling, rigid workflows, and heavy human dependency) are no longer sufficient to meet today’s scale and complexity.

Enter Agent AI. Agent AI is transforming how data annotation is performed by introducing autonomous, semi-autonomous, and collaborative AI systems that can plan, reason, and execute annotation tasks with minimal human intervention. Instead of simply labeling data, AI agents can now understand context, make decisions, and continuously improve.

This blog explores how to use Agent AI in data annotation, including architecture, workflows, tools, benefits, challenges, and real-world use cases.

## What is Agent AI?

Agent AI refers to intelligent systems designed to perform tasks autonomously by:

- Perceiving data (images, text, audio, video)
- Making decisions based on context
- Executing actions (labeling, validating, correcting)
- Learning from feedback

Unlike traditional machine learning models, Agent AI systems are:

- Goal-oriented
- Context-aware
- Capable of multi-step reasoning
- Interactive with humans and other agents

These agents are often powered by large language models (LLMs), computer vision models, and reinforcement learning.

## Why Agent AI Matters in Data Annotation

Traditional annotation challenges include:

- High cost and time consumption
- Human inconsistency and bias
- Difficulty scaling to millions of data points
- Complex multi-modal data handling

Agent AI addresses these challenges by:

- Automating repetitive tasks
- Improving labeling consistency
- Reducing turnaround time
- Enabling dynamic and adaptive workflows

## Core Components of Agent AI Annotation Systems

To use Agent AI effectively in data annotation, you need to understand its architecture.

**1. Perception Layer.** Models that process raw data:

- Computer vision models (for images/videos)
- Speech recognition (for audio)
- NLP models (for text)

**2. Reasoning Engine.** This is where the "agent" becomes intelligent:

- LLM-based reasoning (e.g., task interpretation)
- Rule-based systems
- Context-aware decision-making

**3. Action Module.** Executes annotation tasks:

- Bounding boxes
- Semantic segmentation
- Text classification
- Named entity recognition (NER)

**4. Memory and Feedback Loop.**

- Stores previous annotations
- Learns from corrections
- Improves over time

**5. Human-in-the-Loop Interface.**

- Humans validate edge cases
- Provide feedback
- Handle ambiguity

## How to Use Agent AI in Data Annotation (Step-by-Step)

### Step 1: Define Annotation Objectives

Start by clearly defining:

- Type of data (image, text, audio, video)
- Annotation format (bounding boxes, polygons, tags, transcripts)
- Quality requirements (accuracy thresholds)

Examples:

- Annotating medical images for tumor detection
- Labeling customer sentiment in chat data

### Step 2: Select the Right AI Models

Choose models based on your data:

- Computer vision → YOLO, SAM, Detectron
- NLP → Transformer-based models (LLMs)
- Audio → Whisper-like models

These models act as the foundation for your agent system.

### Step 3: Design the Agent Workflow

Instead of a linear pipeline, Agent AI uses dynamic workflows. An example workflow:

1. The agent reads the task instructions.
2. A pre-labeling model generates initial annotations.
3. The agent evaluates the confidence score.
4. If confidence is high, the annotation is accepted.
5. If confidence is low, it is sent to a human reviewer.
6. The agent learns from the corrections.

### Step 4: Implement Multi-Agent Collaboration

You can use multiple agents for different roles:

- Annotation Agent → labels data
- Validation Agent → checks quality
- Correction Agent → fixes errors
- Supervisor Agent → manages workflow

This modular approach can improve scalability and accuracy.

### Step 5: Integrate Human-in-the-Loop

Even the best agents need human oversight.
Use humans for:

- Edge cases
- Ambiguous data
- Quality audits

Best practices:

- Escalate only low-confidence cases to humans
- Continuously retrain agents using human feedback

### Step 6: Build Feedback and Learning Loops

Agent AI systems improve over time through:

- Reinforcement learning
- Active learning
- Continuous fine-tuning

Example: if a human corrects a bounding box, the agent stores the correction and uses it to improve future predictions, for instance as a training example in the next fine-tuning round.

### Step 7: Monitor and Optimize Performance

Track key metrics:

- Annotation accuracy
- Speed (labels/hour)
- Cost per annotation
- Human intervention rate

Use dashboards and analytics to continuously refine your system.

## Real-World Use Cases

**1. Autonomous Driving**

- Annotating LiDAR and video data
- Agents handle object detection and tracking
- Humans validate rare scenarios

**2. Healthcare AI**

- Labeling medical images
- Extracting clinical entities from text
- Ensuring compliance and precision

**3. E-commerce**

- Product categorization
- Image tagging
- Customer sentiment analysis

**4. Conversational AI**

- Intent classification
- Entity extraction
- Dialogue annotation

## Tools and Platforms for Agent AI Annotation

Popular tools include:

- CVAT
- Labelbox
- Supervisely
- Roboflow

These platforms can be extended with Agent AI capabilities using APIs and LLM integrations.

## Benefits of Using Agent AI in Annotation

**1. Scalability.** Handle millions of data points efficiently.

**2. Cost Reduction.** Reduce reliance on large annotation teams.

**3. Speed.** Accelerate project timelines significantly.

**4. Consistency.** Minimize human variability.

**5. Continuous Improvement.** Agents learn and improve with time.

## Challenges and Limitations

Despite its advantages, Agent AI comes with challenges:

**1. Initial Setup Complexity.** Designing agent workflows requires expertise.

**2. Model Bias.** Agents may inherit biases from training data.

**3. Quality Control.** Over-reliance on automation can reduce accuracy if not monitored.

**4. Data Privacy.** Sensitive data requires strict governance.
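Three pieces of the step-by-step guide above fit together in a few lines of code:

- The confidence-based routing from Step 3.
- The "escalate only low-confidence cases" practice from Step 5.
- The human intervention rate metric from Step 7.

The Python sketch below assumes a hypothetical pre-labeling model that returns a label and a confidence score for each item. The 0.85 threshold is an arbitrary example value; in practice it would be tuned against measured annotation accuracy. Human corrections are collected as (item, predicted, corrected) triples so they can feed the retraining loop described in Step 6.

```python
from dataclasses import dataclass, field


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # score from a hypothetical pre-labeling model, 0.0 to 1.0


@dataclass
class Routing:
    accepted: list = field(default_factory=list)   # auto-accepted by the agent
    escalated: list = field(default_factory=list)  # sent to human reviewers

    @property
    def human_intervention_rate(self) -> float:
        total = len(self.accepted) + len(self.escalated)
        return len(self.escalated) / total if total else 0.0


def route(predictions: list[Prediction], threshold: float = 0.85) -> Routing:
    """Accept high-confidence labels; escalate the rest to humans."""
    routing = Routing()
    for p in predictions:
        (routing.accepted if p.confidence >= threshold else routing.escalated).append(p)
    return routing


def merge_reviews(routing: Routing, human_labels: dict[str, str]):
    """Combine accepted and human-reviewed labels; collect corrections as feedback."""
    final = {p.item_id: p.label for p in routing.accepted}
    feedback = []
    for p in routing.escalated:
        corrected = human_labels.get(p.item_id, p.label)  # reviewer may confirm the label
        final[p.item_id] = corrected
        if corrected != p.label:
            feedback.append((p.item_id, p.label, corrected))
    return final, feedback


preds = [
    Prediction("img1", "car", 0.97),
    Prediction("img2", "truck", 0.60),
    Prediction("img3", "pedestrian", 0.91),
    Prediction("img4", "cyclist", 0.40),
]
routing = route(preds)
labels, feedback = merge_reviews(routing, {"img2": "bus"})
print(routing.human_intervention_rate)  # 0.5
print(feedback)  # [('img2', 'truck', 'bus')]
```

Raising the threshold sends more items to humans, which raises both quality and cost. Tracking `human_intervention_rate` alongside accuracy is one way to make that trade-off visible.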
## Best Practices

To successfully implement Agent AI:

- Start with pilot projects
- Use hybrid human-AI workflows
- Focus on high-impact use cases first
- Continuously evaluate performance
- Invest in training and infrastructure

## Future of Agent AI in Data Annotation

The future is moving toward:

- Fully autonomous annotation systems
- Multi-modal agents handling text, image, and video together
- Self-improving pipelines with minimal human intervention
- Integration with real-time AI systems

Agent AI will not replace humans. Instead, it will augment human capabilities, making annotation faster, smarter, and more scalable.

## How SO Development Can Help

At SO Development, we specialize in advanced AI data solutions, including:

- Agent AI-powered annotation workflows
- Large-scale data collection and labeling
- Multi-modal annotation (LiDAR, image, text, audio)
- Custom AI pipeline development

With more than 600 projects and expert annotators, we combine human expertise with intelligent automation to deliver high-quality datasets for your AI models.

## Conclusion

Agent AI is redefining data annotation by introducing intelligence, autonomy, and adaptability into the process. By combining machine efficiency with human judgment, organizations can achieve faster, cheaper, and more accurate annotation at scale.

If you’re looking to stay competitive in the AI space, adopting Agent AI in your annotation workflow is no longer optional; it’s essential.

## Frequently Asked Questions (FAQ)

1. What is Agent AI in

## Introduction

Artificial Intelligence has rapidly evolved over the past decade. Initially, most systems were designed as single-agent models, where one AI handled a specific task such as classification, prediction, or automation.

But real-world problems are rarely that simple. Modern challenges such as global logistics, autonomous driving, financial markets, and climate systems require multiple decision-makers operating simultaneously.

This is where multi-agent systems (MAS) come in. Rather than relying on a single "super-intelligence," MAS distributes intelligence across multiple autonomous agents that interact, collaborate, and adapt in real time.

This shift represents one of the most important transformations in AI: from isolated intelligence to collaborative intelligence.

## What Are Multi-Agent Systems?

A multi-agent system is a collection of independent computational entities, called agents, that operate within a shared environment. Each agent:

- Has its own goals or objectives
- Perceives the environment
- Makes decisions independently
- Interacts with other agents

These agents can:

- Cooperate
- Compete
- Coexist with partial alignment

The overall system behavior emerges from these interactions, often producing outcomes more sophisticated than any single agent could achieve.

## The Core Concept: Emergence

One of the defining features of MAS is emergent behavior. This means:

- The system exhibits intelligence at a higher level than individual agents
- Complex patterns arise from simple rules

Examples:

- Ant colonies organizing without central control
- Traffic flow optimization through decentralized signals
- Market dynamics driven by independent traders

In AI, emergence allows systems to:

- Solve problems dynamically
- Adapt without centralized oversight
- Scale efficiently

## Key Components of Multi-Agent Systems

### 1. Agents

Agents are the building blocks of MAS.
They can vary widely in complexity.

Types of agents:

- Reactive agents: respond to stimuli without memory
- Deliberative agents: plan actions based on internal models
- Learning agents: improve over time using data
- Hybrid agents: combine multiple approaches

Each agent typically includes:

- Sensors (input)
- Actuators (output)
- Decision-making logic
- Knowledge base

### 2. Environment

The environment is where agents operate.

Types of environments:

- Physical (robots, drones)
- Digital (software systems, simulations)
- Hybrid (IoT systems combining both)

Environment properties:

- Static vs dynamic
- Deterministic vs stochastic
- Fully observable vs partially observable

### 3. Communication

Agents must exchange information to function effectively.

Communication methods:

- Message passing
- Shared memory
- APIs
- Event-driven systems

Protocols:

- Structured languages (ACL, the Agent Communication Language)
- Negotiation protocols
- Auction mechanisms

### 4. Coordination Mechanisms

Coordination ensures agents work efficiently together. Common approaches:

- Task allocation
- Consensus algorithms
- Market-based coordination
- Rule-based systems

### 5. Decision-Making Models

Agents use various strategies:

- Rule-based systems
- Optimization algorithms
- Machine learning models
- Reinforcement learning

## Types of Multi-Agent Systems

### 1. Cooperative Systems

Agents share a common goal. Example: warehouse robots working together to fulfill orders.

Key features:

- Shared rewards
- High communication
- Strong coordination

### 2. Competitive Systems

Agents have conflicting objectives. Example: algorithmic trading bots competing in financial markets.

Key features:

- Strategic behavior
- Game theory
- Limited information sharing

### 3. Mixed Systems

Most real-world systems fall into this category. Example: on ride-sharing platforms, drivers cooperate with the system but compete with each other.

### 4. Hierarchical Systems

Agents are organized in layers:

- High-level agents (decision-makers)
- Low-level agents (executors)

### 5. Swarm Intelligence Systems

Inspired by nature (ants, bees, birds). Characteristics:

- Simple agents
- No central control
- Emergent coordination

## Architectures of Multi-Agent Systems

### Centralized vs Decentralized

Centralized:

- One controller coordinates agents
- Easier to manage
- Less scalable

Decentralized:

- No central authority
- Agents act independently
- Highly scalable and robust

### Distributed Architecture

Agents are distributed across networks. Benefits:

- Fault tolerance
- Parallel processing
- Geographic scalability

### Hybrid Architecture

Combines centralized and decentralized approaches.

## Algorithms Used in Multi-Agent Systems

### 1. Game Theory

Used in competitive environments. Concepts:

- Nash equilibrium
- Zero-sum games
- Strategy optimization

### 2. Reinforcement Learning (Multi-Agent RL)

Agents learn through interaction. Types:

- Cooperative RL
- Competitive RL
- Self-play

### 3. Consensus Algorithms

Used for agreement among agents. Examples:

- Voting mechanisms
- Distributed consensus

### 4. Auction Algorithms

Agents bid for tasks or resources. Applications:

- Logistics
- Cloud computing

### 5. Evolutionary Algorithms

Agents evolve strategies over time.

## Real-World Applications

### 1. Autonomous Vehicles

Cars act as agents that:

- Communicate with each other
- Share traffic data
- Help prevent accidents

Future: fully coordinated traffic ecosystems.

### 2. Smart Cities

Agents manage:

- Traffic lights
- Energy consumption
- Waste systems

### 3. Healthcare Systems

Applications:

- Patient monitoring agents
- Diagnostic assistants
- Resource allocation

### 4. Finance and Trading

Agents:

- Analyze market data
- Execute trades
- Manage risk

### 5. Supply Chain and Logistics

Agents represent:

- Suppliers
- Warehouses
- Delivery routes

Outcome: optimized delivery and reduced costs.

### 6. Robotics and Swarms

Examples:

- Drone fleets
- Agricultural robots
- Disaster response

### 7. Gaming and Simulation

NPCs behave independently, creating realistic worlds.

### 8. Cybersecurity

Agents:

- Detect threats
- Respond autonomously
- Adapt to new attacks

## Challenges of Multi-Agent Systems

### 1. Coordination Complexity

As the number of agents grows, interactions multiply quickly. With n agents there are n(n−1)/2 possible pairwise communication links, so doubling the team roughly quadruples them. The space of joint actions grows exponentially with n.

### 2. Communication Overhead

Too much messaging slows performance.

### 3. Conflict Resolution

Agents may:

- Compete for resources
- Have conflicting goals

### 4. Security Risks

Distributed systems are vulnerable to:

- Attacks
- Data breaches

### 5. Debugging and Testing

It is hard to trace:

- Emergent behavior
- System-wide bugs

### 6. Ethical Concerns

Questions arise:

- Who is responsible for decisions?
- How can fairness be ensured?

## Multi-Agent Systems vs Single-Agent AI

Key differences:

| Aspect | Single-Agent | Multi-Agent |
|---|---|---|
| Intelligence | Centralized | Distributed |
| Complexity | Lower | Higher |
| Scalability | Limited | High |
| Flexibility | Moderate | High |
| Resilience | Low | High |

## Multi-Agent Systems + Large Language Models

A major development is combining MAS with advanced AI models. For example, each agent:

- Has a specialized role
- Uses language models to communicate

Use cases:

- AI research assistants
- Automated business workflows
- Coding agents collaborating
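Auction algorithms, listed earlier as a coordination technique for logistics and task allocation, are simple to illustrate. The Python sketch below implements a basic sequential single-item auction. Tasks are announced one at a time, every agent bids its travel cost, and the lowest bid wins. For illustration only, it assumes that robots and tasks sit at positions on a one-dimensional line, such as aisle positions in a warehouse. Real systems use richer cost models and often combinatorial or iterative auctions.

```python
def auction_allocate(agent_positions: dict[str, float],
                     task_positions: dict[str, float]) -> dict[str, str]:
    """Sequential single-item auction: each task goes to the lowest bidder.

    An agent's bid is the distance from its current position to the task.
    After winning, the agent moves to the task, so its later bids reflect
    its new location (a simple way to account for work already taken on).
    """
    positions = dict(agent_positions)  # copy: don't mutate the caller's data
    assignment: dict[str, str] = {}
    for task, task_pos in task_positions.items():
        bids = {agent: abs(pos - task_pos) for agent, pos in positions.items()}
        winner = min(bids, key=bids.get)  # ties go to the first agent listed
        assignment[task] = winner
        positions[winner] = task_pos
    return assignment


robots = {"robot_a": 0.0, "robot_b": 10.0}
tasks = {"pick_1": 2.0, "pick_2": 9.0, "pick_3": 4.0}
print(auction_allocate(robots, tasks))
# {'pick_1': 'robot_a', 'pick_2': 'robot_b', 'pick_3': 'robot_a'}
```

Each agent only needs to compute its own bid; a lightweight auctioneer just compares the numbers. That property is what makes market-based coordination attractive in decentralized settings. Note that a greedy sequential auction is not guaranteed to find the globally optimal allocation.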

## Introduction

Artificial intelligence is undergoing a major shift. For the past few years, large language models (LLMs) have primarily acted as responsive tools: systems that generate answers when prompted.

But a new paradigm is emerging: Agentic AI. Instead of simply responding, AI systems are now able to plan, decide, act, and iterate toward goals. These systems are called AI agents, and they represent one of the most important transitions in modern software design.

In this article, we’ll explain what Agentic AI is, why it matters, and the five core design patterns that turn LLMs into capable AI agents.

## What Is Agentic AI?

Agentic AI refers to AI systems that can independently pursue objectives by combining reasoning, memory, tools, and decision-making workflows.

Unlike traditional chat-based AI, an agentic system can:

- Understand a goal instead of a single prompt
- Break tasks into steps
- Choose actions dynamically
- Use external tools and data
- Evaluate results and improve outcomes

In simple terms: a chatbot answers questions; an AI agent completes tasks.

Agentic AI transforms LLMs from passive generators into active problem-solvers.

## Why Agentic AI Matters

The shift toward agent-based systems unlocks entirely new capabilities:

- Automated research assistants
- Software development agents
- Autonomous customer support workflows
- Data analysis pipelines
- Personal productivity copilots

Organizations are moving from prompt engineering to system design, where success depends less on clever prompts and more on architecture. That architecture is built using repeatable design patterns.

## The Five Design Patterns for Agentic AI

### 1. The Planner–Executor Pattern

**Core idea:** Separate thinking from doing.

The agent first creates a plan, then executes actions step by step.
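The following minimal Python sketch shows the planner–executor split. The planner here is a hypothetical rule-based stand-in for what would normally be an LLM call that decomposes the goal. The executor runs each step in order and retries a failed step. If a step still fails, it stops and reports which step failed so the plan can be revised. The step names and actions are illustrative only.

```python
from typing import Callable

Action = Callable[[dict], dict]


def plan(goal: str) -> list[str]:
    """Planner: decompose a goal into ordered steps.
    Hypothetical stand-in for an LLM planning call."""
    if "report" in goal.lower():
        return ["collect_sources", "summarize", "write_report"]
    return ["answer_directly"]


def execute(steps: list[str], actions: dict[str, Action], max_retries: int = 1) -> dict:
    """Executor: run steps in order, retry failures, and log every attempt."""
    state: dict = {"log": []}
    for step in steps:
        for attempt in range(1, max_retries + 2):  # first try plus max_retries retries
            try:
                state = actions[step](state)
                state["log"].append(f"{step}: ok on attempt {attempt}")
                break
            except Exception as exc:  # sketch only: real code would catch narrower errors
                state["log"].append(f"{step}: failed ({exc})")
        else:
            state["failed_step"] = step  # hand control back so the plan can be revised
            return state
    return state


attempts = {"summarize": 0}


def collect_sources(state: dict) -> dict:
    state["sources"] = ["market survey", "sales data"]
    return state


def summarize(state: dict) -> dict:
    attempts["summarize"] += 1
    if attempts["summarize"] == 1:
        raise TimeoutError("model timed out")  # simulate a transient failure
    state["summary"] = f"{len(state['sources'])} sources reviewed"
    return state


def write_report(state: dict) -> dict:
    state["report"] = f"Report: {state['summary']}"
    return state


actions = {"collect_sources": collect_sources, "summarize": summarize,
           "write_report": write_report}
result = execute(plan("Write a market report"), actions)
print(result["report"])  # Report: 2 sources reviewed
```

Keeping the plan as explicit data (a list of step names) is what makes each step loggable and checkable. That is the practical payoff of separating thinking from doing.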
How it works:

1. Interpret the user goal
2. Generate a task plan
3. Execute each step
4. Adjust based on results

Why it matters:

- Can reduce hallucinations, because each step is explicit and can be checked
- Improves reliability
- Enables long-running tasks

Example use cases:

- Research agents
- Coding assistants
- Multi-step automation workflows

### 2. Tool-Using Agent Pattern

**Core idea:** LLMs become powerful when connected to tools.

Instead of relying only on internal knowledge, agents call external systems such as:

- APIs
- Databases
- Search engines
- Calculators
- Internal company services

Agent loop:

1. Reason about the next action
2. Select a tool
3. Execute the tool call
4. Interpret the output

**Key insight:** LLMs provide reasoning; tools provide precision.

This pattern turns AI from a text generator into a functional system operator.

### 3. Memory-Augmented Agent Pattern

**Core idea:** Agents need memory to improve over time.

Without memory, every interaction resets context. Agentic systems introduce structured memory layers:

- Short-term memory: conversation context
- Long-term memory: stored knowledge
- Working memory: active task state

Benefits:

- Personalization
- Continuity across sessions
- Improved decision-making

Memory enables agents to behave less like chat sessions and more like collaborators.

### 4. Reflection and Self-Critique Pattern

**Core idea:** Agents improve by evaluating their own outputs.

After completing an action, the agent asks:

- Did this achieve the goal?
- What errors occurred?
- Should I retry differently?

This creates an iterative improvement loop.

Typical workflow:

1. Generate a solution
2. Critique the result
3. Revise the approach
4. Produce an improved output

Why it matters:

- Higher accuracy
- Fewer logical failures
- Better reasoning chains

Reflection turns single-pass generation into an adaptive, iterative process.

### 5. Multi-Agent Collaboration Pattern

**Core idea:** Multiple specialized agents can outperform one general agent.

Instead of a single system doing everything, responsibilities are divided:

- Planner agent
- Research agent
- Writer agent
- Reviewer agent
- Executor agent

Agents communicate and coordinate toward shared goals.
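The collaboration pattern can be sketched with plain functions acting as agents and a small coordinator passing messages between them. In this hypothetical Python example, the agents play three roles:

- A research agent supplies notes.
- A writer agent drafts.
- A reviewer agent either approves the draft or sends feedback.

A round limit guarantees that the loop terminates. Each agent body is a placeholder for what would normally be an LLM call with a role-specific prompt. The approval rule (the draft must cite its source) is an arbitrary example. The writer and reviewer exchange also shows how the reflection pattern can be split across two agents instead of one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Message:
    sender: str
    content: str


def research_agent(topic: str) -> Message:
    # Placeholder: a real research agent would call search tools or an LLM.
    notes = {"agentic ai": ["agents plan their work", "agents call external tools"]}
    return Message("research", "; ".join(notes.get(topic.lower(), [])))


def writer_agent(notes: Message, feedback: Optional[Message]) -> Message:
    draft = f"Summary: {notes.content}"
    if feedback is not None and "cite" in feedback.content:
        draft += " [source: research notes]"  # revise in response to the reviewer
    return Message("writer", draft)


def reviewer_agent(draft: Message) -> Message:
    # Example rule: approve only drafts that cite their source.
    if "[source:" in draft.content:
        return Message("reviewer", "APPROVED")
    return Message("reviewer", "Please cite the research notes.")


def collaborate(topic: str, max_rounds: int = 3) -> list[Message]:
    """Coordinator: route messages between agents until approval or the round limit."""
    notes = research_agent(topic)
    transcript = [notes]
    feedback: Optional[Message] = None
    for _ in range(max_rounds):
        draft = writer_agent(notes, feedback)
        feedback = reviewer_agent(draft)
        transcript += [draft, feedback]
        if feedback.content == "APPROVED":
            break
    return transcript


for msg in collaborate("Agentic AI"):
    print(f"{msg.sender}: {msg.content}")
```

The first draft is rejected and the revised draft is approved, so the transcript holds five messages. Because every exchange is recorded as a `Message`, the transcript doubles as an audit log of how the agents reached their result.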
Advantages:

- Specialization improves quality
- Scalable workflows
- Modular architecture

This mirrors how human teams operate and often produces more reliable outcomes.

How These Patterns Work Together

Most real-world agentic systems combine several patterns:

| Capability | Design Pattern |
| --- | --- |
| Task decomposition | Planner–Executor |
| External actions | Tool Use |
| Learning over time | Memory |
| Quality improvement | Reflection |
| Scalability | Multi-Agent Systems |

Agentic AI is not one technique; it is a composition of coordinated behaviors.

Agentic AI Architecture (Conceptual Stack)

A typical AI agent system includes:

- LLM reasoning layer: understanding and planning
- Orchestration layer: workflow control
- Tool layer: APIs and integrations
- Memory layer: persistent knowledge
- Evaluation loop: reflection and monitoring

Designing agents is therefore closer to systems engineering than to prompt writing.

Challenges of Agentic AI

Despite its promise, Agentic AI introduces new complexities:

- Latency from multi-step reasoning
- Cost management for long workflows
- Safety and permission boundaries
- Evaluation and debugging difficulties
- Orchestration reliability

Successful implementations focus on constrained autonomy rather than unlimited freedom.

Risks: Trust Without Ground Truth

The normalization of synthetic authority introduces several societal risks:

- Erosion of shared reality: communities may inhabit different perceived truths.
- Manipulation at scale: political and commercial persuasion becomes cheaper and more targeted.
- Institutional distrust: genuine sources struggle to distinguish themselves from synthetic competitors.
- Cognitive fatigue: constant skepticism exhausts audiences, leading to disengagement or blind acceptance.

The danger is not that people believe everything, but that they stop believing anything reliably.
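The constrained autonomy recommended under Challenges can be made concrete with a small guarded loop. The Python sketch below implements the tool-using agent loop from pattern 2 (reason, select a tool, execute it, interpret the output) inside three guardrails: a tool allowlist, a hard step budget, and a decision log. `propose`, `lookup_price`, `total`, and the price table are all hypothetical stand-ins; in practice `propose` would be an LLM call and the tools would wrap real services.

```python
from typing import Callable, Optional

Action = tuple[str, tuple]  # (tool name, positional arguments)


# Hypothetical tools; real ones would wrap APIs, databases, or search engines.
def lookup_price(item: str) -> float:
    prices = {"widget": 2.5, "gadget": 4.0}  # stand-in for a database query
    return prices[item]


def total(*values: float) -> float:
    return sum(values)


TOOLS: dict[str, Callable[..., float]] = {"lookup_price": lookup_price, "total": total}


def propose(items: list[str], observations: list[float]) -> Optional[Action]:
    """Hypothetical reasoner standing in for an LLM: picks the next tool call
    from the goal (the items to price) and what it has observed so far."""
    if len(observations) < len(items):
        return ("lookup_price", (items[len(observations)],))
    if len(observations) == len(items):
        return ("total", tuple(observations))
    return None  # the last observation is the final answer


def run_agent(
    items: list[str], allowed: set[str], max_steps: int = 5
) -> tuple[Optional[float], list[str]]:
    observations: list[float] = []
    log: list[str] = []  # guardrail 3: every decision is recorded for audit
    for _ in range(max_steps):  # guardrail 1: hard step budget
        action = propose(items, observations)  # reason + select a tool
        if action is None:
            log.append("done")
            return (observations[-1] if observations else None), log
        tool, args = action
        if tool not in allowed or tool not in TOOLS:  # guardrail 2: allowlist
            log.append(f"blocked: {tool} (escalate to a human)")
            return None, log
        result = TOOLS[tool](*args)  # execute the tool call
        observations.append(result)  # interpret: feed the output back
        log.append(f"{tool}{args} -> {result}")
    log.append(f"stopped: step budget of {max_steps} exhausted")
    return None, log


if __name__ == "__main__":
    answer, trace = run_agent(["widget", "gadget"], allowed={"lookup_price", "total"})
    print(answer)
    print("\n".join(trace))
```

Stopping on a blocked tool instead of retrying, and returning `None` when the budget runs out, are deliberate choices in this sketch: a constrained agent hands control back rather than improvising. The returned log is one simple way to follow the "log agent decisions" practice.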
Best Practices for Building AI Agents

- Start with narrow goals
- Add tools gradually
- Log agent decisions
- Implement guardrails early
- Separate planning from execution
- Measure outcomes, not responses

The most effective agents are designed systems, not improvisations.

The Future of Agentic AI

Agentic AI is rapidly becoming a foundation of next-generation software. We are moving toward systems that:

- Manage workflows autonomously
- Collaborate with humans continuously
- Adapt through feedback loops
- Operate across digital environments

Just as web apps defined the 2000s and mobile apps defined the 2010s, AI agents may define the next era of computing.

Conclusion

Agentic AI represents a fundamental evolution in artificial intelligence, shifting from tools that respond to prompts toward systems that pursue goals. The transformation happens through architecture, not magic. Developers can turn LLMs into reliable, capable AI agents by applying five key design patterns:

1. Planner–Executor
2. Tool Use
3. Memory Augmentation
4. Reflection
5. Multi-Agent Collaboration

The future of AI isn’t just smarter models; it’s smarter systems.

FAQ

What is Agentic AI in simple terms?
Agentic AI refers to AI systems that can independently plan and execute tasks to achieve goals rather than only responding to prompts.

How is Agentic AI different from chatbots?
Chatbots generate responses. Agentic AI systems take actions, use tools, remember context, and iteratively work toward outcomes.

Do AI agents replace humans?
No. Most agentic systems are designed to augment human workflows by automating repetitive