Introduction

AI models are getting bigger every year. Modern Large Language Models (LLMs) like Llama, Qwen, and GPT-style models often contain tens of billions of parameters and usually require expensive GPUs with large amounts of VRAM. For most developers, startups, and researchers, running these models locally feels impossible.

But a new tool called oLLM is quietly changing that.

Imagine running models as large as 80B parameters on a consumer GPU with just 8GB of VRAM. Sounds unrealistic, right? Yet that’s exactly what oLLM enables through clever engineering and smart memory management.

In this article, we’ll explore what oLLM is, how it works, and why it may become the secret ingredient for running massive AI models on tiny hardware.

What is oLLM?

oLLM is a lightweight Python library designed for large-context LLM inference on resource-limited hardware. It builds on top of popular frameworks like Hugging Face Transformers and PyTorch, allowing developers to run large AI models locally without requiring enterprise-grade GPUs.

The key idea behind oLLM is simple:

Instead of forcing everything into GPU memory, intelligently move parts of the model to other storage layers.

With this approach, models that normally need hundreds of gigabytes of VRAM can run on standard consumer hardware.

For example, some setups allow models such as:

Llama-3 style models

GPT-OSS-20B

Qwen3-Next-80B

to run on a machine with only 8GB GPU VRAM plus SSD storage.

The Problem with Running Large AI Models

Traditional AI inference assumes one thing:

All model weights must fit inside GPU memory.

This becomes a huge bottleneck because:

| Model Size | Typical VRAM Needed |
|---|---|
| 7B | ~16 GB |
| 13B | ~24 GB |
| 70B | ~140 GB |
| 80B | ~190 GB |

Clearly, that’s far beyond what most consumer GPUs can handle.
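To get a feel for where numbers like these come from, here is a rough back-of-the-envelope sketch. It assumes 2 bytes per parameter (FP16 or BF16) and counts the weights only, so it ignores the KV cache, activations, and framework overhead. The function name `weights_gb` is just an illustration, and published figures are rounded and may include extra overhead, so they will not match a weights-only estimate exactly.

```python
# Rough weights-only memory estimate: parameters x bytes per parameter.
# Assumption: 2 bytes per parameter (FP16/BF16); KV cache and activations are ignored.

BYTES_PER_PARAM_FP16 = 2


def weights_gb(params_billions, bytes_per_param=BYTES_PER_PARAM_FP16):
    """Return the size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * bytes_per_param / 1e9


if __name__ == "__main__":
    for size in (7, 13, 70, 80):
        print(f"{size}B parameters -> ~{weights_gb(size):.0f} GB of weights in FP16")
```

Even this optimistic estimate puts a 7B model above an 8GB card, which is why something has to give: either the precision or where the weights live.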

Even developers with powerful GPUs often rely on quantization, which compresses model weights to reduce memory usage.

But quantization can come with trade-offs:

Reduced accuracy

Lower output quality

Compatibility limitations

oLLM takes a different approach.

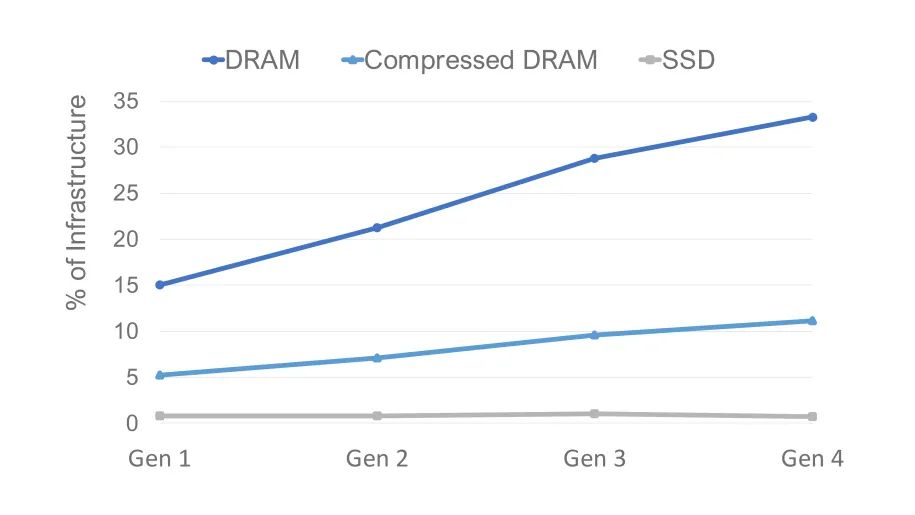

The Core Innovation: SSD Offloading

The breakthrough behind oLLM is SSD-based memory offloading.

Instead of loading the entire model into GPU memory, oLLM streams model components dynamically between:

GPU VRAM

System RAM

High-speed SSD

This means your GPU only holds the active parts of the model at any given time.

This technique makes it possible to run models more than 10x larger than the available GPU memory.

Think of it like this:

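Traditional AI: the entire model has to fit in GPU VRAM before it can run.

oLLM: only the active parts sit in GPU VRAM, while the rest waits in system RAM or on the SSD.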

By turning storage into an extension of GPU memory, oLLM bypasses the biggest limitation in local AI development.
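The general idea of streaming a model layer by layer can be sketched in a few lines of plain Python. This is a toy illustration, not oLLM's actual implementation: the "layers" are tiny affine transforms, pickle files in a temporary directory stand in for the SSD, and an ordinary Python variable stands in for GPU memory. All function names here are hypothetical.

```python
import pickle
import tempfile
from pathlib import Path


def save_layers(layers, directory):
    """Write each layer's weights to its own file, standing in for the SSD."""
    paths = []
    for i, weights in enumerate(layers):
        path = Path(directory) / f"layer_{i}.pkl"
        path.write_bytes(pickle.dumps(weights))
        paths.append(path)
    return paths


def streamed_forward(x, layer_paths):
    """Run the layers in order, keeping only one layer in memory at a time."""
    for path in layer_paths:
        weights = pickle.loads(path.read_bytes())   # stand-in for "load layer into VRAM"
        x = weights["scale"] * x + weights["bias"]  # toy layer: an affine transform
        del weights                                 # stand-in for "free VRAM" before the next layer
    return x


def run_demo():
    layers = [
        {"scale": 2.0, "bias": 1.0},
        {"scale": 0.5, "bias": 0.0},
        {"scale": 3.0, "bias": -1.0},
    ]
    with tempfile.TemporaryDirectory() as tmp:
        paths = save_layers(layers, tmp)
        return streamed_forward(4.0, paths)


if __name__ == "__main__":
    print(run_demo())
```

The result is the same as if all three layers had been loaded up front. The only thing that changes is how much has to be resident at once, and how often the storage device is read.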

No Quantization Needed

Another major advantage of oLLM is that it does not require quantization.

Instead of compressing model weights to 8-bit or 4-bit values, it keeps them in 16-bit formats such as FP16 or BF16, preserving the original model quality.

That means:

Better reasoning quality

More accurate outputs

More reliable responses

For developers working on research, compliance analysis, or long-document reasoning, this can make a huge difference.
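To make the quantization trade-off concrete, here is a minimal sketch of a round trip through 8-bit integers. It uses a deliberately simple scheme (symmetric, one scale for the whole list) and made-up weight values; real quantizers use per-channel or group-wise scales and often lose less, so treat this as an illustration of the mechanism rather than a measurement.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127
    return [round(w / scale) for w in weights], scale


def dequantize(q_weights, scale):
    """Map the integers back to approximate float values."""
    return [q * scale for q in q_weights]


# Hypothetical weights chosen for illustration only.
weights = [0.8, -0.31, 0.0042, 0.127, -1.27]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

if __name__ == "__main__":
    print("quantized:", q)
    print("restored: ", restored)
    print("max abs error:", max_error)
```

In this example the small weight 0.0042 rounds to zero and is lost entirely. Keeping the weights in FP16 or BF16 avoids this kind of rounding, at the cost of needing more memory or, in oLLM's case, more storage traffic.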

Ultra-Long Context Windows

Many AI tools struggle with large documents because of context limits.

oLLM supports extremely long context windows — up to 100,000 tokens.

This allows the model to process:

Entire books

Long research papers

Legal contracts

Massive log files

Large datasets

—all in a single prompt.

This opens the door for advanced offline tasks like:

document intelligence

compliance auditing

enterprise knowledge search

AI-assisted research
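Long context is largely a memory problem, because the attention key/value (KV) cache grows with every token. The sketch below estimates that cache for an assumed model shape: 32 layers, 8 key/value heads, and a head dimension of 128, which is similar to an 8B Llama-3-style model. These numbers are assumptions for illustration, not a description of any specific model oLLM ships with.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Keys and values: 2 tensors per layer, each tokens x kv_heads x head_dim.

    bytes_per_value=2 assumes FP16/BF16 storage.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Assumed shape, similar to an 8B Llama-3-style model with grouped-query attention.
SHAPE = dict(layers=32, kv_heads=8, head_dim=128)

per_token = kv_cache_bytes(1, **SHAPE)
full_context = kv_cache_bytes(100_000, **SHAPE)

if __name__ == "__main__":
    print(f"per token:      {per_token / 1024:.0f} KiB")
    print(f"100,000 tokens: {full_context / 1e9:.1f} GB")
```

Under these assumptions, a 100,000-token prompt needs roughly 13 GB for the cache alone, more than an 8GB card holds. A long-context setup on small hardware therefore presumably has to keep the cache outside VRAM as well, not just the weights.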

Performance Trade-offs

Of course, running massive models on small hardware has trade-offs.

Because parts of the model are constantly streamed from storage, generation is slower than when everything sits in VRAM.

For example:

Large models may generate around 0.5 tokens per second on consumer GPUs.

That might sound slow, but it’s perfectly acceptable for offline workloads, such as:

document analysis

research tasks

batch processing

AI pipelines

In many cases, cost savings outweigh the speed limitations.
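Whether that speed is acceptable depends on the size of the job, and the arithmetic is easy to check. The sketch below assumes the 0.5 tokens per second figure quoted above and counts only generated tokens. It ignores the time needed to read the prompt, so real runs will take longer. The helper names are illustrative.

```python
def generation_time_seconds(output_tokens, tokens_per_second=0.5):
    """Time to generate the given number of tokens at a fixed rate."""
    return output_tokens / tokens_per_second


def format_duration(seconds):
    """Format a number of seconds as hours, minutes, and seconds."""
    minutes, secs = divmod(int(round(seconds)), 60)
    hours, minutes = divmod(minutes, 60)
    return f"{hours}h {minutes:02d}m {secs:02d}s"


if __name__ == "__main__":
    # One 500-token summary.
    print(format_duration(generation_time_seconds(500)))
    # A batch of 200 documents, 500 generated tokens each.
    print(format_duration(generation_time_seconds(200 * 500)))
```

A single 500-token answer takes about 17 minutes, and a batch of 200 such answers runs for a little over two days. That is workable for an overnight or weekend job, but not for anything interactive.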

Multimodal Capabilities

oLLM is not limited to text models.

It can also support multimodal AI systems, including models that process:

text + audio

text + images

Examples include models like:

Voxtral-Small-24B (audio + text)

Gemma-3-12B (image + text)

This allows developers to build advanced AI applications that combine multiple data types.

Why oLLM Matters for the Future of AI

AI is currently dominated by cloud infrastructure and billion-dollar GPU clusters.

But tools like oLLM represent a shift toward democratized AI infrastructure.

Instead of needing:

expensive GPUs

massive cloud budgets

specialized infrastructure

developers can experiment with powerful models on regular hardware.

This unlocks new opportunities for:

indie developers

startups

academic researchers

privacy-focused applications

Local AI and Privacy

Running AI locally also has a major benefit: privacy.

When models run on your own machine:

no data leaves your system

no prompts are logged by a third-party service

sensitive documents remain private

This is especially valuable for industries like:

healthcare

finance

legal services

government
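If you want to be sure that nothing reaches the network during inference, the Hugging Face libraries that oLLM builds on can be switched into offline mode with environment variables. The sketch below sets `HF_HUB_OFFLINE` and `TRANSFORMERS_OFFLINE`. It assumes the model files have already been downloaded, and the helper name `enable_offline_mode` is just for illustration.

```python
import os


def enable_offline_mode():
    """Tell the Hugging Face libraries to use local files only.

    Call this before importing transformers or huggingface_hub,
    because the settings are read when those libraries are imported.
    """
    os.environ["HF_HUB_OFFLINE"] = "1"
    os.environ["TRANSFORMERS_OFFLINE"] = "1"


enable_offline_mode()
```

With these set, loading a model that is not already on disk fails with an error instead of quietly downloading it, which is a useful safety net on machines that handle sensitive documents.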

Use Cases for oLLM

Some real-world applications include:

Research assistants

Analyze entire research papers or datasets locally.

Legal document analysis

Process massive contracts and legal records with long context windows.

Offline AI pipelines

Run batch inference jobs without relying on cloud services.

Privacy-focused AI tools

Keep sensitive data completely local.

Developer experimentation

Test large models without investing in expensive hardware.

Limitations to Know

While impressive, oLLM isn’t perfect.

Current limitations include:

Slower inference compared to full-VRAM setups

Heavy SSD usage

Limited compatibility with some hardware (like certain Apple Silicon setups)

However, these are common trade-offs in early infrastructure tools.

As storage speeds and optimization techniques improve, performance will likely get better.

The Bigger Trend: AI on Everyday Devices

oLLM is part of a larger shift toward local AI computing.

We are moving from:

Cloud-only AI → Hybrid AI → Fully local AI

Future devices may run powerful AI models directly on:

laptops

smartphones

edge devices

IoT hardware

This transformation will make AI more accessible, private, and decentralized.

Final Thoughts

oLLM proves something important:

You don’t always need a $10,000 GPU server to run powerful AI.

Through clever memory management, SSD streaming, and high-precision inference, oLLM enables developers to run massive AI models on surprisingly small hardware.

For AI enthusiasts, researchers, and builders, this is an exciting step toward a future where anyone can run advanced AI locally.

And that might be the real secret sauce.
