Introduction

Computer vision has come a long way, but high-performing AI models often come with a catch: they’re huge, resource-hungry, and impractical for mobile devices. The original Segment Anything Model (SAM) broke ground in universal image segmentation, yet its massive size made real-time, on-device use nearly impossible.

In this series, we explore Mobile Segment Anything (MobileSAM), a lightweight, mobile-ready adaptation that brings powerful segmentation to smartphones, embedded systems, and edge devices. MobileSAM keeps SAM’s flexibility and most of its precision while dramatically reducing computational demands, opening the door to real-time AI applications wherever you need them.

From mobile photo editing to augmented reality, robotics, and even healthcare imaging, MobileSAM makes it possible to run sophisticated image segmentation directly on-device: fast, efficient, and without sending images to a server. In short, it’s AI vision, untethered.

What Is MobileSAM?

MobileSAM is a lightweight adaptation of the Segment Anything Model (SAM) designed to perform image segmentation with significantly reduced computational requirements.

Image segmentation is the process of identifying and separating objects within an image at the pixel level. Instead of simply detecting objects, segmentation precisely outlines them.
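The toy Python example below makes the difference between detection and segmentation concrete. The mask values are made up for illustration. A detector would report only the bounding box. A segmentation mask records exactly which pixels belong to the object, so for an L-shaped object the box also covers pixels that are pure background.

```python
# A segmentation mask marks exactly which pixels belong to an object.
# Here a 5x6 image contains an L-shaped object (1 = object, 0 = background).
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0, 0],
    [0, 1, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 0, 0, 0, 0, 0],
]


def mask_area(mask):
    """Count object pixels in a binary mask."""
    return sum(sum(row) for row in mask)


def bounding_box(mask):
    """Return (x0, y0, x1, y1), inclusive, of the smallest box covering the mask."""
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, v in enumerate(row) if v]
    return min(xs), min(ys), max(xs), max(ys)


x0, y0, x1, y1 = bounding_box(mask)
box_area = (x1 - x0 + 1) * (y1 - y0 + 1)
print(mask_area(mask), box_area)  # 5 object pixels vs 9 pixels inside the box
```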

MobileSAM performs this task with accuracy close to SAM’s while running far faster and using much less memory.

Key Idea

Replace SAM’s heavy image encoder with a compact one, while keeping the prompt encoder and mask decoder (and with them, SAM’s segmentation capability) intact.

The result:

- Faster inference

- Lower memory usage

- Mobile compatibility

- Near-SAM performance
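Swapping the encoder only works if the new encoder produces embeddings that the existing mask decoder understands. The MobileSAM authors achieve this by training the small encoder to reproduce the large encoder's image embeddings, an approach they call decoupled distillation.

The sketch below shows the idea in miniature. Both "encoders" are hypothetical one-line stand-ins: a frozen teacher that scales its input by 3, and a student with one trainable weight. The student is fitted by gradient descent on the mean squared error between the two embeddings. Real training uses neural networks and an autodiff framework.

```python
def mse(student_emb, teacher_emb):
    """Mean squared error between two embeddings of equal length."""
    assert len(student_emb) == len(teacher_emb)
    return sum((s - t) ** 2 for s, t in zip(student_emb, teacher_emb)) / len(teacher_emb)


def teacher_encoder(features):
    # Hypothetical stand-in for the large, frozen encoder: a fixed scaling.
    return [3.0 * f for f in features]


def student_encoder(features, w):
    # Hypothetical stand-in for the compact encoder with one trainable weight `w`.
    return [w * f for f in features]


def distill(images, w=0.0, lr=0.05, steps=200):
    """Fit the student so its embeddings match the teacher's (MSE loss)."""
    for _ in range(steps):
        for feats in images:
            target = teacher_encoder(feats)  # teacher stays frozen
            pred = student_encoder(feats, w)
            # d/dw of mean((w*f - t)^2) = mean(2 * (w*f - t) * f)
            grad = sum(2 * (p - t) * f for p, t, f in zip(pred, target, feats)) / len(feats)
            w -= lr * grad
    return w


images = [[0.5, 1.0, -0.5], [1.0, 0.2, 0.3]]  # made-up "image features"
w = distill(images)
print(round(w, 3), mse(student_encoder(images[0], w), teacher_encoder(images[0])))
```

Only the encoder is trained here. The decoder is never touched, which is the point of the approach: anything downstream of the embedding can be reused as-is.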

Why MobileSAM Was Created

The original SAM introduced a universal, promptable segmentation approach capable of segmenting almost any object in an image. However, it required:

- High GPU power

- Large memory capacity

- Server-level hardware

This limited real-world deployment.

MobileSAM was developed to solve three major challenges:

- Edge deployment

- Real-time performance

- Energy efficiency

Now segmentation can run directly on devices instead of relying on cloud processing.

How MobileSAM Works

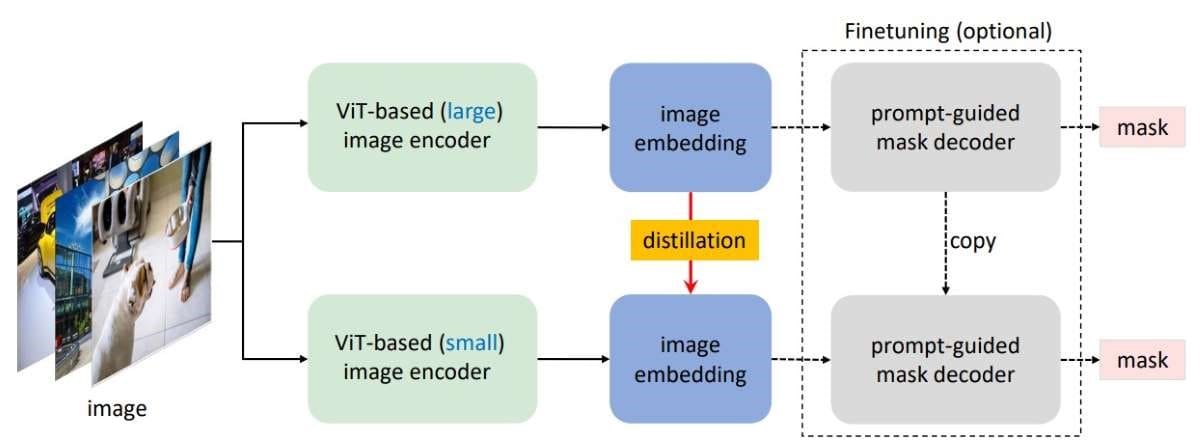

MobileSAM keeps SAM’s general pipeline but optimizes the architecture.

1. Lightweight Image Encoder

The main change is replacing SAM’s large Vision Transformer encoder (ViT-H) with a much smaller, mobile-friendly backbone based on TinyViT.

Benefits:

- Reduced parameters

- Faster computation

- Lower latency
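A back-of-the-envelope estimate shows why the encoder is the part worth replacing. In a plain ViT block, the attention projections hold about 4d² weights, and an MLP with a 4× expansion holds about 8d², where d is the embedding width.

The sketch below applies this estimate to two configurations:
- the ViT-H configuration used by SAM's default encoder (width 1280, 32 blocks);
- a hypothetical ViT-Tiny-sized encoder (width 192, 12 blocks).

This is only an approximation. It ignores biases, normalization, and the patch embedding. MobileSAM's actual TinyViT encoder is a hierarchical design that this formula does not model exactly.

```python
def approx_vit_params(width, depth, mlp_ratio=4):
    """Rough parameter count of a plain ViT encoder's transformer blocks.

    Counts only weight matrices: attention uses 4 * width^2 (Q, K, V and output
    projections) and the MLP uses 2 * mlp_ratio * width^2. Biases, layer norms,
    patch/positional embeddings and any neck are ignored.
    """
    per_block = 4 * width**2 + 2 * mlp_ratio * width**2
    return depth * per_block


big = approx_vit_params(width=1280, depth=32)   # ViT-H configuration
small = approx_vit_params(width=192, depth=12)  # hypothetical ViT-Tiny-sized stand-in
print(f"{big / 1e6:.0f}M vs {small / 1e6:.1f}M parameters, ~{big / small:.0f}x fewer")
# prints: 629M vs 5.3M parameters, ~119x fewer
```

Because the width term is squared, narrowing the encoder shrinks it much faster than removing blocks does.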

2. Prompt-Based Segmentation

Like SAM, MobileSAM accepts prompts such as:

- Points

- Bounding boxes

- Masks

- Text guidance (via integrations)

Users can guide and refine results interactively, for example by adding a background point to exclude a region that the first mask wrongly included.
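Under the hood, point and box prompts are just coordinates, plus a label for each point. The sketch below defines a hypothetical `Prompt` container and the rescaling step that maps pixel coordinates in the original photo onto the resized model input.

It follows the conventions of SAM's reference code:
- the longest image side is resized to 1024 pixels;
- points use label 1 for foreground and 0 for background.

With the reference `SamPredictor`, this transform is applied for you inside `predict()`. Treat this as an illustration of what happens, not code you need to write.

```python
from dataclasses import dataclass, field
from typing import Optional

LONG_SIDE = 1024  # SAM's reference code resizes the longest image side to 1024 px


def preprocess_shape(h, w, long_side=LONG_SIDE):
    """(height, width) of the image after scaling its longest side to long_side."""
    scale = long_side / max(h, w)
    return int(h * scale + 0.5), int(w * scale + 0.5)


@dataclass
class Prompt:
    """Hypothetical container for point and box prompts, in pixel coordinates."""
    points: list = field(default_factory=list)  # [(x, y), ...]
    labels: list = field(default_factory=list)  # 1 = foreground, 0 = background
    box: Optional[tuple] = None                 # (x0, y0, x1, y1)


def to_model_coords(prompt, h, w):
    """Rescale prompt coordinates from the original image to the resized input."""
    new_h, new_w = preprocess_shape(h, w)
    sx, sy = new_w / w, new_h / h
    points = [(x * sx, y * sy) for x, y in prompt.points]
    box = None
    if prompt.box is not None:
        x0, y0, x1, y1 = prompt.box
        box = (x0 * sx, y0 * sy, x1 * sx, y1 * sy)
    return Prompt(points=points, labels=list(prompt.labels), box=box)


# A 640x480 photo: one click on the object, one on background to exclude, plus a box.
prompt = Prompt(points=[(320, 240), (50, 50)], labels=[1, 0], box=(100, 80, 500, 400))
scaled = to_model_coords(prompt, h=480, w=640)
print(scaled.points, scaled.labels, scaled.box)
```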

3. Efficient Mask Decoder

The mask decoder is kept the same as SAM’s. This preserves segmentation quality, while the pipeline as a whole benefits from the faster encoder.
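The split between a heavy image encoder and a light mask decoder is what makes interactive use cheap. The image embedding can be computed once and reused for every new click or box. The reference `SamPredictor` exposes this as `set_image()` followed by any number of `predict()` calls.

The class below is a hypothetical, model-free sketch of that pattern. Its fake encoder and decoder functions only count how often they run.

```python
class InteractiveSegmenter:
    """Sketch of the encode-once, decode-per-prompt pattern.

    `encoder` and `decoder` are hypothetical callables standing in for the
    image encoder and mask decoder; no real model is loaded here.
    """

    def __init__(self, encoder, decoder):
        self.encoder = encoder
        self.decoder = decoder
        self._embedding = None

    def set_image(self, image):
        # Expensive step: run the image encoder once per image.
        self._embedding = self.encoder(image)

    def predict(self, prompt):
        # Cheap step: run only the mask decoder for each new prompt.
        if self._embedding is None:
            raise RuntimeError("call set_image() before predict()")
        return self.decoder(self._embedding, prompt)


calls = {"encoder": 0, "decoder": 0}


def fake_encoder(image):
    calls["encoder"] += 1
    return sum(image)  # placeholder "embedding"


def fake_decoder(embedding, prompt):
    calls["decoder"] += 1
    return (embedding, prompt)  # placeholder "mask"


seg = InteractiveSegmenter(fake_encoder, fake_decoder)
seg.set_image([1, 2, 3])
for click in [(10, 20), (30, 40), (50, 60)]:
    seg.predict(click)
print(calls)  # {'encoder': 1, 'decoder': 3}
```

For one image with n prompts, the cost is roughly one encoder pass plus n decoder passes. This is why encoder speed matters most when every frame is a new image, as in live video.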

Key Features of MobileSAM

Real-Time Performance

MobileSAM runs far faster than the original SAM, fast enough for live, interactive applications.
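"Real time" is ultimately a budget: all per-frame work must finish within one frame interval, which is 1000 / fps milliseconds (about 33.3 ms at 30 fps). In live video every frame is a new image, so the encoder runs on every frame. The latencies in this sketch are hypothetical placeholders; substitute measurements from your target device.

```python
def frame_budget_ms(fps):
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def fits_realtime(encoder_ms, decoder_ms, fps, prompts_per_frame=1):
    """True if encoding one frame plus decoding its prompts fits the frame budget."""
    return encoder_ms + prompts_per_frame * decoder_ms <= frame_budget_ms(fps)


print(round(frame_budget_ms(30), 1))                        # 33.3
print(fits_realtime(encoder_ms=20, decoder_ms=5, fps=30))   # True (25 ms <= 33.3 ms)
print(fits_realtime(encoder_ms=400, decoder_ms=5, fps=30))  # False
```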

Mobile & Edge Ready

Designed for:

- Smartphones

- AR/VR devices

- Robotics systems

- IoT cameras

General-Purpose Segmentation

Like SAM, it segments objects across diverse categories without task-specific retraining.

Energy Efficient

Lower computational demand means less energy per image and longer battery life.

MobileSAM vs Original SAM

| Feature | SAM | MobileSAM |
|---|---|---|
| Model Size | Very Large | Lightweight |
| Hardware Needs | GPU Recommended | Mobile Compatible |
| Speed | Moderate | Very Fast |
| Edge Deployment | Limited | Excellent |
| Accuracy | Extremely High | Close to SAM |

MobileSAM trades a small amount of accuracy for massive gains in usability and speed.
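A common way to put a number on the accuracy gap is intersection over union (IoU) between two masks. IoU is the number of pixels both masks mark, divided by the number of pixels either mask marks. Comparing MobileSAM's mask with SAM's mask for the same prompt directly measures how closely the smaller model tracks the original. The two masks below are made-up examples.

```python
def mask_iou(a, b):
    """Intersection over union of two same-sized binary masks (lists of 0/1 rows)."""
    inter = union = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            inter += 1 if (pa and pb) else 0
            union += 1 if (pa or pb) else 0
    return inter / union if union else 1.0  # two empty masks count as identical


sam_mask = [
    [0, 1, 1],
    [0, 1, 1],
    [0, 0, 0],
]
mobile_mask = [
    [0, 1, 1],
    [0, 1, 0],
    [0, 0, 0],
]
print(mask_iou(sam_mask, mobile_mask))  # 3 shared pixels / 4 total = 0.75
```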

Real-World Use Cases

1. Mobile Photo Editing Apps

Instant background removal and object selection directly on-device.
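Once a mask exists, background removal is a compositing step. The mask becomes the alpha channel, so selected pixels stay opaque and everything else turns transparent. The sketch below uses plain Python lists and a made-up 2×2 image to stay self-contained. A real app would do the same with an image library or on the GPU.

```python
def remove_background(pixels, mask):
    """Turn an RGB image into RGBA, making pixels outside the mask transparent.

    `pixels` is a list of rows of (r, g, b) tuples; `mask` has the same shape,
    with 1 for the selected object and 0 for background.
    """
    out = []
    for px_row, m_row in zip(pixels, mask):
        out.append([(r, g, b, 255 if m else 0) for (r, g, b), m in zip(px_row, m_row)])
    return out


image = [
    [(10, 20, 30), (200, 0, 0)],
    [(200, 0, 0), (10, 20, 30)],
]
mask = [
    [0, 1],
    [1, 0],
]
print(remove_background(image, mask))
# [[(10, 20, 30, 0), (200, 0, 0, 255)], [(200, 0, 0, 255), (10, 20, 30, 0)]]
```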

2. Augmented Reality (AR)

Real-time object segmentation improves immersive AR experiences.

3. Robotics

Robots can understand environments locally without cloud dependence.

4. Autonomous Systems

Drones and smart vehicles benefit from lightweight perception models.

5. Healthcare Imaging

Portable medical devices can analyze images offline.

Advantages of On-Device Segmentation

Running segmentation locally provides major benefits:

- Privacy protection (no cloud upload)

- Reduced latency

- Offline functionality

- Lower operational cost

- Improved responsiveness
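The latency advantage can be estimated with simple arithmetic. A cloud request pays for uploading the image and a network round trip on top of inference. On-device inference pays only for inference itself.

All numbers below are hypothetical assumptions, not measurements. The on-device figure is deliberately set slower than the server's, to show that local processing can still win end to end.

```python
def cloud_latency_ms(image_bytes, uplink_mbps, rtt_ms, server_ms):
    """Upload time + network round trip + server-side inference, in ms."""
    upload_ms = image_bytes * 8 * 1000 / (uplink_mbps * 1_000_000)
    return upload_ms + rtt_ms + server_ms


def local_latency_ms(device_ms):
    """On-device inference has no network terms."""
    return device_ms


# Hypothetical: a 500 KB image over a 10 Mbit/s uplink, 60 ms round trip and
# 20 ms of server inference, versus 50 ms of (slower) on-device inference.
cloud = cloud_latency_ms(image_bytes=500_000, uplink_mbps=10, rtt_ms=60, server_ms=20)
local = local_latency_ms(device_ms=50)
print(f"cloud: {cloud:.0f} ms, on-device: {local:.0f} ms")
```

Under these assumptions the upload alone takes 400 ms, far more than either inference step. Network conditions, not compute, often dominate cloud latency.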

MobileSAM aligns perfectly with the growing trend of edge AI computing.

Performance and Efficiency

MobileSAM achieves:

- Dramatically reduced model size

- Faster inference speeds

- Comparable segmentation quality to SAM

- Lower power consumption

This balance makes it practical for commercial applications where performance and efficiency must coexist.
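Model size on disk and in memory follows directly from the parameter count: each weight takes 4 bytes in 32-bit floating point and 2 bytes in 16-bit. The helper below estimates the size of the weights alone for a hypothetical 10-million-parameter model. Activations and runtime overhead come on top of this.

```python
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weights_size_mb(num_params, dtype="float32"):
    """Approximate size of the weights alone, in megabytes (10**6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e6


for dtype in ("float32", "float16"):
    print(dtype, weights_size_mb(10_000_000, dtype), "MB")
# float32 40.0 MB
# float16 20.0 MB
```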

Developer Benefits

Developers adopting MobileSAM gain:

- Easier deployment pipelines

- Reduced infrastructure costs

- Cross-platform compatibility

- Real-time interaction capabilities

It fits into common tooling, including:

- PyTorch

- ONNX (for export to runtimes such as ONNX Runtime)

- Mobile AI runtimes

Challenges and Limitations

Despite its advantages, MobileSAM still has trade-offs:

- Slight accuracy reduction compared to full SAM

- Performance varies across hardware

- Complex scenes may still require larger models
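Because performance varies across hardware, latency figures are only meaningful when measured on the device you plan to ship to. Below is a minimal, framework-agnostic timing helper. It discards a few warm-up runs to exclude one-time initialization costs, and it reports the median, which is less sensitive to occasional slow runs than the mean.

`dummy_inference` is a placeholder workload. With GPU frameworks that execute asynchronously, you would also need to synchronize the device before reading the timer (for example, `torch.cuda.synchronize()` in PyTorch).

```python
import statistics
import time


def benchmark(fn, *args, warmup=3, repeats=20):
    """Median wall-clock latency of fn(*args) in milliseconds.

    Warm-up runs are discarded so one-time costs (lazy initialization, caches)
    do not skew the result.
    """
    for _ in range(warmup):
        fn(*args)
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        samples.append((time.perf_counter() - start) * 1000)
    return statistics.median(samples)


def dummy_inference(n):
    # Placeholder workload; replace with a real model call on the target device.
    return sum(i * i for i in range(n))


print(f"{benchmark(dummy_inference, 100_000):.2f} ms")
```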

However, ongoing optimization continues to close these gaps.